This is the landing page for my project, Erasure Code: http://www.erasurecode.com

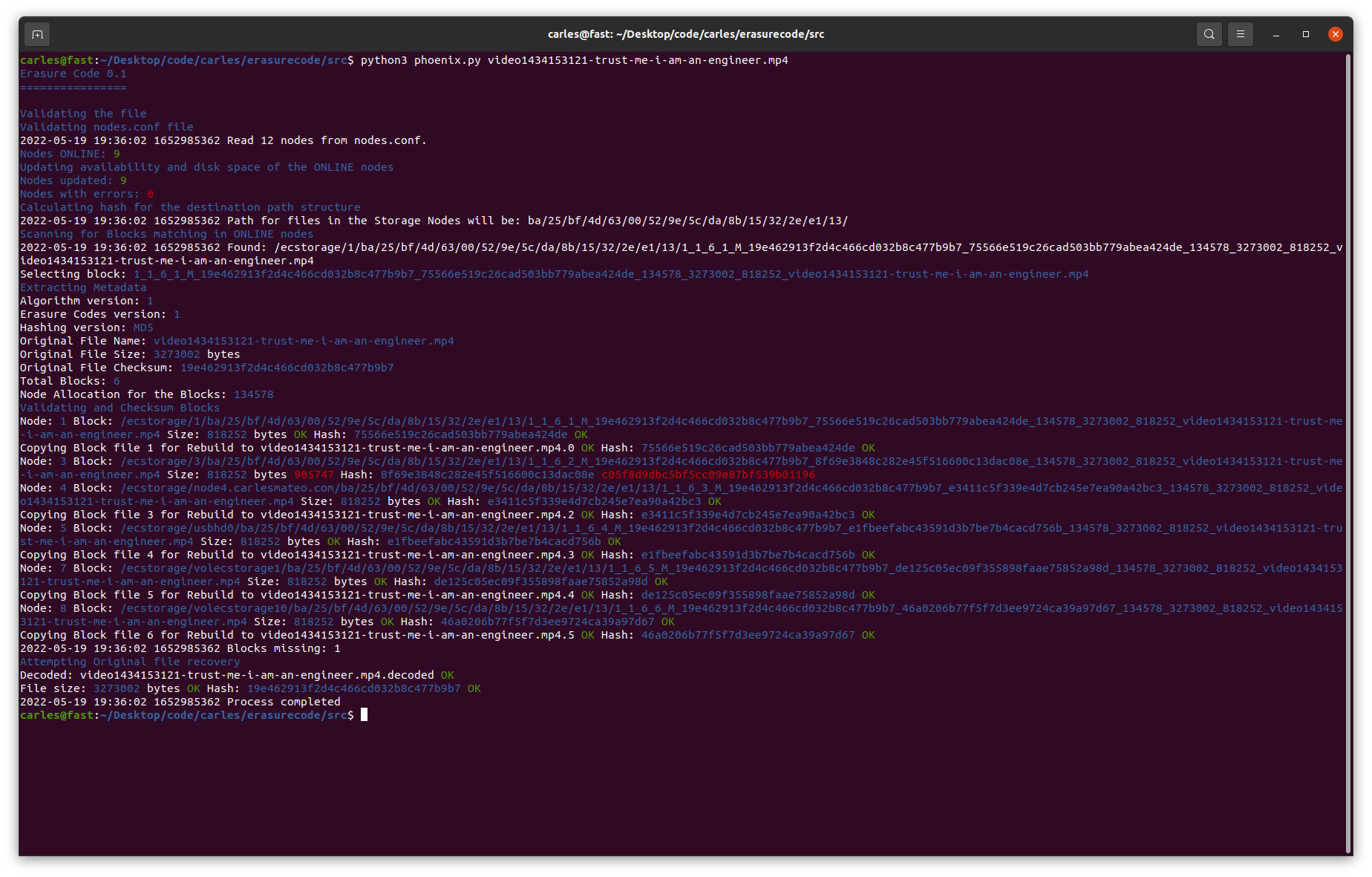

It’s a storage system designed for virtually unlimited scaling with very little maintenance and no single point of failure. Data is broken into blocks, encoded with erasure coding algorithms, and distributed across different servers and even different cloud providers. Erasure coding algorithms add redundant information, with the property that the original data can be rebuilt from any combination of enough surviving blocks.

For example, if you encode a 1 GB file using a 10 + 4 configuration, 14 blocks are created: 10 data blocks plus 4 redundant blocks, which adds 40% of redundant information. The original data can be rebuilt from any combination of 10 surviving blocks.
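The arithmetic behind the 10 + 4 example can be sketched in a few lines of Python. This is only an illustration: the function name `storage_plan` and its parameters are hypothetical and not part of the project, and 1 GB is assumed to mean 10^9 bytes.

```python
def storage_plan(file_size_bytes, data_blocks, parity_blocks):
    """Describe a k + m layout (hypothetical helper, for illustration only)."""
    total_blocks = data_blocks + parity_blocks
    # Each block holds 1/k of the file, rounded up (ceiling division).
    block_size = -(-file_size_bytes // data_blocks)
    return {
        "total_blocks": total_blocks,
        "block_size": block_size,
        "total_stored": block_size * total_blocks,
        # Extra space relative to the original data: m / k.
        "overhead": parity_blocks / data_blocks,
        # Any k blocks are enough, so up to m blocks may be lost.
        "tolerated_losses": parity_blocks,
    }


plan = storage_plan(10**9, data_blocks=10, parity_blocks=4)
print(plan)
```

With these assumptions, each of the 14 blocks is 100 MB, the total stored is 1.4 GB, and up to 4 blocks can be lost.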

My project distributes each block to a different server. In other words, you can afford to lose 4 servers and still recover your data.
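To make the "any 10 of 14" property concrete, here is a toy erasure code in Python. It is a sketch under stated assumptions, not the algorithm my project uses: it treats the data as points on a polynomial over the small prime field modulo 257 and rebuilds it by Lagrange interpolation, with one symbol standing in for a whole block. Production systems typically use Reed-Solomon codes over GF(2^8) on large blocks. The names `encode`, `decode` and `interpolate_at` are hypothetical. It needs Python 3.8 or later for the modular inverse `pow(x, -1, P)`.

```python
from itertools import combinations

P = 257  # prime modulus of the toy field (assumption; parity symbols may be 256)


def interpolate_at(points, x):
    """Evaluate at x the unique polynomial of degree < len(points) through points, mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, -1, P) is the modular inverse of den.
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data, parity):
    """Return k + parity blocks as (position, value) pairs; the first k are the data itself."""
    k = len(data)
    points = list(enumerate(data, start=1))
    extra = [(x, interpolate_at(points, x)) for x in range(k + 1, k + parity + 1)]
    return points + extra


def decode(surviving, k):
    """Rebuild the k original values from any k surviving (position, value) blocks."""
    points = list(surviving)[:k]
    return [interpolate_at(points, x) for x in range(1, k + 1)]


K, M = 10, 4
data = list(b"0123456789")   # 10 data symbols, one per "server"
blocks = encode(data, M)     # 14 blocks in total

# Lose 4 arbitrary servers and rebuild from the remaining 10.
survivors = [b for i, b in enumerate(blocks) if i not in {0, 3, 7, 12}]
recovered = decode(survivors, K)

# Check every possible choice of 10 surviving blocks out of 14.
all_ok = all(decode(s, K) == data for s in combinations(blocks, K))
print(bytes(recovered), all_ok)
```

The first 10 blocks are the data itself, so in the normal case nothing needs decoding; interpolation is only needed when some of those blocks are missing.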

My project provides high availability, is resilient to node failures, has no single point of failure, and does not require external software to operate. It’s designed in a way that makes it very easy to recover the data after a major disaster or outage.

It doesn’t only store and retrieve data; it also serves data in real time. Imagine that YouTube videos are stored using Erasure Code and decoded on demand. The encoded block files are the data and the backup at the same time.

Similar solutions are used by technology giants; I’m making them available to every company.
