How to schedule snapshots for your instances in Google Cloud.

Be aware that snapshots may be scheduled automatically, and daily, by Google.

You may want to schedule weekly snapshots to be retained for 90 days or similar.


This is a mechanism I invented and have been using for decades to migrate or clone my Linux Desktops to other physical servers.

This script is focused on Ubuntu, but I was already doing this 30 years ago, for X Window, when I was responsible for the Linux platform of an ISP (Internet Service Provider). So it is compatible with any Linux Desktop or Server.

It has the advantage of being a very lightweight backup. You don't need to back up /etc or /var, as long as you install a new OS and restore the folders that you backed up. You can also back up and restore Wine (the Windows compatibility layer) programs completely, and to/from VMs and Instances as well.

It is based on users rather than machines.

It backs up into a folder named with the timestamp, so you keep all the different versions as they were modified over time. If you prefer to avoid time versions and keep only the latest backup, you can merge the backups into the same folder: in that case, replace s_PATH_BACKUP_NOW="${s_PATH_BACKUP}${s_DATETIME}/" with s_PATH_BACKUP_NOW="${s_PATH_BACKUP}", for instance. You can also add a folder per machine if you prefer, for example if you use the same userid across several Desktops/Servers.

This is a much simplified version of my scripts, but it may well serve your needs.

#!/usr/bin/env bash

# Author: Carles Mateo

# Last Update: 2022-10-23 10:48 Irish Time

# User we want to backup data for

s_USER="carles"

# Target PATH for the Backups

s_PATH_BACKUP="/home/${s_USER}/Desktop/Bck/"

s_DATE=$(date +"%Y-%m-%d")

s_DATETIME=$(date +"%Y-%m-%d-%H-%M-%S")

s_PATH_BACKUP_NOW="${s_PATH_BACKUP}${s_DATETIME}/"

echo "Creating path $s_PATH_BACKUP and $s_PATH_BACKUP_NOW"

mkdir -p "${s_PATH_BACKUP}"

mkdir -p "${s_PATH_BACKUP_NOW}"

s_PATH_KEY="/home/${s_USER}/Desktop/keys/2007-01-07-cloud23.pem"

s_DOCKER_IMG_JENKINS_EXPORT=${s_DATE}-jenkins-base.tar

s_DOCKER_IMG_JENKINS_BLUEOCEAN2_EXPORT=${s_DATE}-jenkins-blueocean2.tar

s_PGP_FILE=${s_DATETIME}-pgp.zip

# Version the PGP files

echo "Compressing the PGP files as ${s_PGP_FILE}"

zip -r ${s_PATH_BACKUP_NOW}${s_PGP_FILE} /home/${s_USER}/Desktop/PGP/*

# Copy to BCK folder, or ZFS or to an external drive Locally as defined in: s_PATH_BACKUP_NOW

echo "Copying Data to ${s_PATH_BACKUP_NOW}data/"

rsync -a --acls --xattrs --owner --group --times --stats --human-readable --progress -z "/home/${s_USER}/Desktop/data/" "${s_PATH_BACKUP_NOW}data/"

rsync -a --exclude={'Desktop','Downloads','.local/share/Trash/','.local/lib/python2.7/','.local/lib/python3.6/','.local/lib/python3.8/','.local/lib/python3.10/','.cache/JetBrains/'} --acls --xattrs --owner --group --times --stats --human-readable --progress -z "/home/${s_USER}/" "${s_PATH_BACKUP_NOW}home/${s_USER}/"

rsync -a --acls --xattrs --owner --group --times --stats --human-readable --progress -z "/home/${s_USER}/Desktop/code/" "${s_PATH_BACKUP_NOW}code/"

echo "Showing backup dir ${s_PATH_BACKUP_NOW}"

ls -hal ${s_PATH_BACKUP_NOW}

df -h /

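See how I exclude certain folders, like Desktop or Downloads, with --exclude. Bash brace expansion turns --exclude={'Desktop','Downloads',...} into one --exclude option per folder.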

It relies on the very useful rsync program. It also relies on zip to compress entire folders (the PGP keys in the example).

If you use the second part, which saves and compresses Docker Images (Jenkins in this example), it needs sudo, and you will also need gzip.

# continuation... sudo running required.

# Save Docker Images

echo "Saving Docker Jenkins /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_EXPORT}"

sudo docker save jenkins:base --output /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_EXPORT}

echo "Saving Docker Jenkins /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_BLUEOCEAN2_EXPORT}"

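# Assumed tag for the Blue Ocean image (adjust it to yours); saving jenkins:base here would just duplicate the first export

sudo docker save jenkins:blueocean2 --output /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_BLUEOCEAN2_EXPORT}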

echo "Setting permissions"

sudo chown ${s_USER}:${s_USER} /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_EXPORT}

sudo chown ${s_USER}:${s_USER} /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_BLUEOCEAN2_EXPORT}

echo "Compressing /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_EXPORT}"

gzip /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_EXPORT}

gzip /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_BLUEOCEAN2_EXPORT}

rsync -a --acls --xattrs --owner --group --times --stats --human-readable --progress -z "/home/${s_USER}/Desktop/Docker_Images/" "${s_PATH_BACKUP_NOW}Docker_Images/"

There is a final part, if you want to back up to remote Servers using ssh:

# continuation... to copy to a remote Server.

s_PATH_REMOTE="bck7@cloubbck11.carlesmateo.com:/Bck/Desktop/${s_USER}/data/"

# Copy to the other Server

rsync -e "ssh -i ${s_PATH_KEY}" -a --acls --xattrs --owner --group --times --stats --human-readable --progress -z "/home/${s_USER}/Desktop/data/" "${s_PATH_REMOTE}"

I recommend using the same methodology on all your Desktops, for example having a data/ folder in the Desktop of each user.

You can use Erasure Coding to split the Backups into blocks and store each piece with a different Cloud Provider.
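The Erasure Code project itself isn't reproduced here. As a minimal sketch of the underlying idea, assuming a single XOR parity block (the RAID 5 principle, which survives the loss of any one piece; real erasure codes such as Reed-Solomon tolerate several losses), the hypothetical functions below split a backup into k pieces plus parity and rebuild one missing piece.

```python
from functools import reduce
from typing import List, Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def split_with_parity(data: bytes, k: int) -> List[bytes]:
    """Split data into k equal blocks (zero-padded) plus one XOR parity block."""
    block_size = max(1, -(-len(data) // k))  # ceiling division
    padded = data.ljust(block_size * k, b"\0")
    blocks = [padded[i * block_size:(i + 1) * block_size] for i in range(k)]
    return blocks + [reduce(xor_bytes, blocks)]


def recover(pieces: List[Optional[bytes]]) -> List[bytes]:
    """Rebuild at most one missing piece (None) by XORing all the others."""
    missing = [i for i, piece in enumerate(pieces) if piece is None]
    if len(missing) > 1:
        raise ValueError("single parity can rebuild only one lost piece")
    rebuilt = list(pieces)
    if missing:
        rebuilt[missing[0]] = reduce(xor_bytes, [p for p in pieces if p is not None])
    return rebuilt


def join(pieces: List[bytes], original_length: int) -> bytes:
    """Concatenate the data blocks (not the parity) and strip the padding."""
    return b"".join(pieces[:-1])[:original_length]
```

Each of the k + 1 pieces could go to a different provider; losing any single provider still leaves enough to call recover() and then join() with the original length.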

You can also store your Backups long-term with services like Amazon Glacier.

Other ideas are storing certain files in git and in Hadoop HDFS.

If you want, you can checksum your files before copying them to another device or server, and verify them after the copy.

You can use tools like sha512sum or md5sum.
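If you prefer to checksum from a script, here is a sketch using Python's hashlib. It reads in 1 MiB chunks (an arbitrary choice) so large backups don't need to fit in RAM, and formats the result in the same "hash, two spaces, path" layout that sha512sum prints for ordinary filenames, so the output should be checkable with sha512sum -c. The function names are hypothetical.

```python
import hashlib


def sha512_file(path: str, chunk_size: int = 1024 * 1024) -> str:
    """Return the hex SHA-512 of a file, reading it in chunks to bound memory."""
    digest = hashlib.sha512()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def sha512sum_line(path: str) -> str:
    """Format like sha512sum does for ordinary filenames: '<hash>  <path>'."""
    return f"{sha512_file(path)}  {path}"
```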

Twitch stream on 2022-06-06 10:50 IST

In this very long session we went through actual errors in a ZFS pool: we checked the Kernel, removed and reinserted the drive, ran zpool scrub… In the meantime I talked about Racks, Rack Servers, PSUs, redundant components, ECC RAM…

I was already contributing, but on the 2nd of May I started my own radio space on Radio America Barcelona, also streamed on Twitch, Google Podcasts, Apple, Spotify…

My space is named The New Digital World (“el nou món digital”) and I talk about tech news, technology, videogames and handy tricks.

This content is in Catalan only, so I add it to the blog with titles ending in [CA].

For my university thesis I've been creating an Erasure Coding solution that allows you to encode and distribute files seamlessly across a universe of Servers in different cloud providers, balancing the disk space used. It is super easy to use and resilient, allowing recovery from disasters.

I released my project, named Erasure Code (www.erasurecode.com), as Open Source, so companies of all sizes will be able to benefit from this technology, which until now was only available to multinationals.

Here you can watch a presentation and a demo:

I hope this will help tons of companies and startups, hopefully scientific startups, to save costs, focus more on their business and make a better world.

My final presentation was on the 20th of May.

I've updated my book Python Combat Guide with a few additions.

It is currently 405 pages, DIN-A4 size, plus code downloadable from GitLab.

It can be downloaded as PDF DRM-free.

Updates to this version 1.08 2022-05-11:

My health is improving.

Thanks to my self-discipline, following a good diet and taking the medicines… I've seen a spectacular improvement since I was rushed to the hospital with my life at risk.

I'm very grateful that amazing doctors took care of me.

I had some ups and downs while pushing to finish my final project for the HDip in Computer Science Cloud Computing, but I managed to complete everything on time.

I had to travel to visit amazing specialists and pay for expensive treatments; however, everything worked and my health has improved drastically. I am very happy to have additional sources of income, like teaching programming and my technical books, which helped me deal with all these unexpected expenses. I appreciate every single sale of my books, as they made me feel useful and appreciated when I was a bit low, and I appreciate the kind gestures some readers had. Thanks.

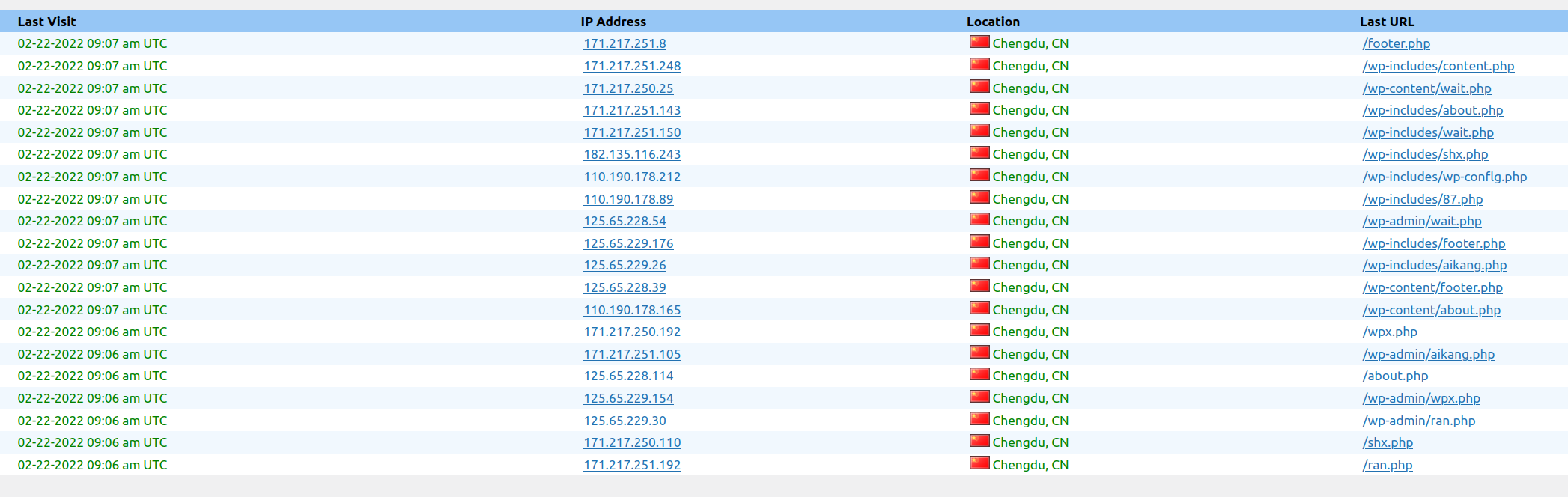

I've kept blocking in the Firewall any IP, and its network, coming to the blog from Russia and Belarus. I've blocked millions of IP Addresses so far.

I've also blocked the traffic coming from CSPs (Cloud Service Providers) when I detect an attack and the IP belongs to them. Most of the attacks came from Digital Ocean, followed by your-server.de and hetzner.de, and finally Amazon. Curiously, some attacks came from Microsoft IPs.

I’ve blocked all these ranges of IPs, hundreds of thousands.
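To see how blocking whole networks adds up to millions of addresses so quickly (a single /16 is already 65,536 addresses), here is a sketch with Python's ipaddress module. It only models the bookkeeping, not the firewall itself; the ranges are RFC 5737 documentation examples, not my actual blocklist, and IPv4 only is assumed.

```python
import ipaddress
from typing import Iterable, List

# Hypothetical ranges from the RFC 5737 documentation blocks, not a real blocklist.
EXAMPLE_BLOCKLIST = ["192.0.2.0/24", "198.51.100.0/24", "198.51.100.128/25"]


def load_networks(cidrs: Iterable[str]) -> List[ipaddress.IPv4Network]:
    """Parse CIDR strings and merge overlapping or adjacent IPv4 ranges."""
    networks = [ipaddress.ip_network(cidr) for cidr in cidrs]
    return list(ipaddress.collapse_addresses(networks))


def is_blocked(ip: str, networks: List[ipaddress.IPv4Network]) -> bool:
    """True if the address falls inside any blocked network."""
    address = ipaddress.ip_address(ip)
    return any(address in network for network in networks)


def count_addresses(networks: List[ipaddress.IPv4Network]) -> int:
    """How many individual addresses the ranges cover."""
    return sum(network.num_addresses for network in networks)
```

Collapsing first means the /25 that sits inside 198.51.100.0/24 is not counted twice.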

Despite blocking all these IPs from CSPs, the number of visitors keeps growing.

In the end, my blog is for Engineers and for people; I have no interest in bots, and I don't get any revenue from ads (I never added ads), so I'm perfectly happy having fewer visitors, as long as they are humans who find help in the blog.

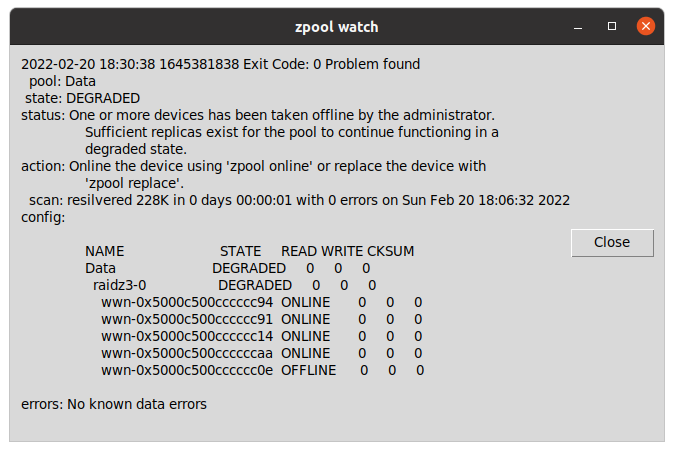

zpool watch is a small Python program for Linux workstations with a graphical environment and ZFS, which checks every 30 seconds whether your OpenZFS pools are OK.

If a pool is not healthy, it displays a message in a window using tkinter.

Basically, it saves you from continuously checking zpool status in the terminal, or from having to customize the ZED service to send an email and figure out how it could spawn an alert window in the graphical session, or what to do if no session has been started.
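zpool watch's source isn't shown here; the following is a hypothetical sketch of the core check, assuming the pool health is read from zpool list -H -o name,health (tab-separated, no header) and that any state other than ONLINE deserves an alert.

```python
import subprocess
from typing import List, Tuple


def unhealthy_pools(listing: str) -> List[Tuple[str, str]]:
    """Parse `zpool list -H -o name,health` output; return (name, health) not ONLINE."""
    problems = []
    for line in listing.splitlines():
        if not line.strip():
            continue
        name, health = line.split("\t")[:2]
        if health != "ONLINE":
            problems.append((name, health))
    return problems


def check_pools() -> List[Tuple[str, str]]:
    """Run zpool (assumed to be on PATH) and return the unhealthy pools."""
    result = subprocess.run(["zpool", "list", "-H", "-o", "name,health"],
                            capture_output=True, text=True, check=True)
    return unhealthy_pools(result.stdout)
```

A watcher would call check_pools() every 30 seconds and, when the list is not empty, show it with tkinter's messagebox.showwarning.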

Since the last News from the Blog, I've released carleslibs v.1.0.6, v.1.0.5 and v.1.0.4.

v.1.0.6 adds a new class OsUtils to deal with mostly Linux OS tasks, like knowing the userid, the username, whether it's root, the distribution name and the kernel version.

It also adds:

DatetimeUtils.sleep(i_seconds)
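The OsUtils facts listed above can be approximated with Python's standard library. This is a hypothetical sketch, not the carleslibs API: the function names are illustrative, it is Unix-only (it uses pwd and os.getuid), and it assumes the distribution is described by /etc/os-release.

```python
import os
import platform
import pwd  # Unix-only module


def read_os_release(path: str = "/etc/os-release") -> dict:
    """Parse KEY=value lines from os-release into a dict (quotes stripped)."""
    info = {}
    try:
        with open(path) as handle:
            for line in handle:
                key, sep, value = line.strip().partition("=")
                if sep:
                    info[key] = value.strip('"')
    except FileNotFoundError:
        pass
    return info


def os_summary() -> dict:
    """Collect the kind of facts an OsUtils-style helper might expose."""
    uid = os.getuid()
    try:
        username = pwd.getpwuid(uid).pw_name
    except KeyError:  # uid without a passwd entry, common in containers
        username = str(uid)
    return {
        "userid": uid,
        "username": username,
        "is_root": uid == 0,
        "distribution": read_os_release().get("PRETTY_NAME", "unknown"),
        "kernel": platform.release(),
    }
```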

In v.1.0.5 I've included a new method for getting the Datetime in Unix Epoch format as an Integer, and increased Code Coverage to 95% for the ScreenUtils class.

v. 1.0.4 contains a minor update, a method in StringUtils to escape html from a string.

It uses the html module (part of the Python standard library), so creating this method and its Unit Test was a small job for me, but I wanted to use carleslibs in more projects, and having it as core functionality makes the code of the projects I'm working on much clearer.
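For reference, the standard-library call behind this kind of method is html.escape. A hypothetical standalone wrapper (not the actual StringUtils signature) looks like this:

```python
import html


def escape_html(text: str) -> str:
    """Escape &, <, > and both quote characters so text is safe inside HTML."""
    return html.escape(text, quote=True)
```

With quote=True (the default), double quotes become &quot; and single quotes become &#x27;, which also makes the result safe inside attribute values.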

I'm working on the future v.1.0.7.

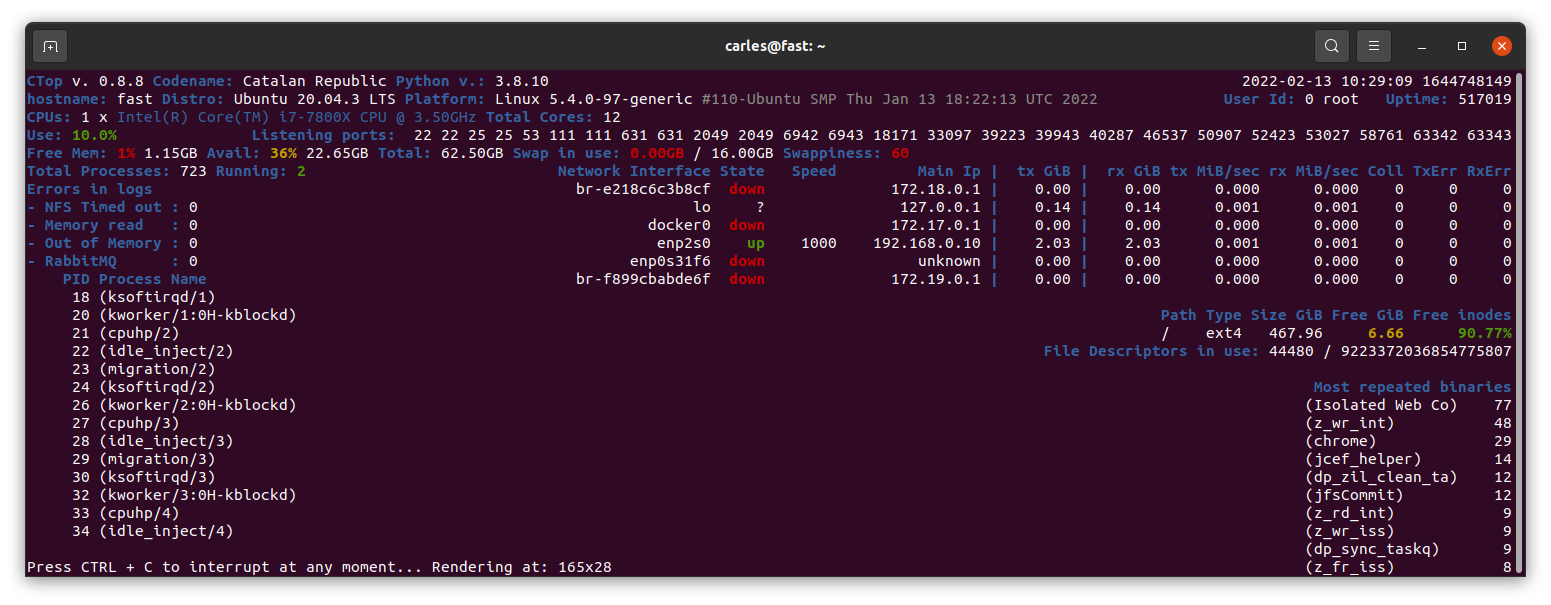

I released the stable version 0.8.8 and tagged it.

Minor refactors, more Code Coverage (Unit Testing), and protection in the code against division by zero when the seconds passed as an int are 0 (this was not an actual error, but it is worth protecting the code just in case, for the future).
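CTOP's code isn't reproduced here; this is a minimal sketch of that kind of guard, assuming a rate computed as an amount divided by elapsed whole seconds, with a hypothetical function name. Returning 0.0 for a zero interval is a design choice; raising or returning None would also be reasonable.

```python
def safe_rate(amount: float, seconds: int) -> float:
    """Return amount per second, or 0.0 when no time has elapsed."""
    if seconds <= 0:
        return 0.0  # avoid ZeroDivisionError on the first sample
    return amount / seconds
```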

Working on branch 0.8.9.

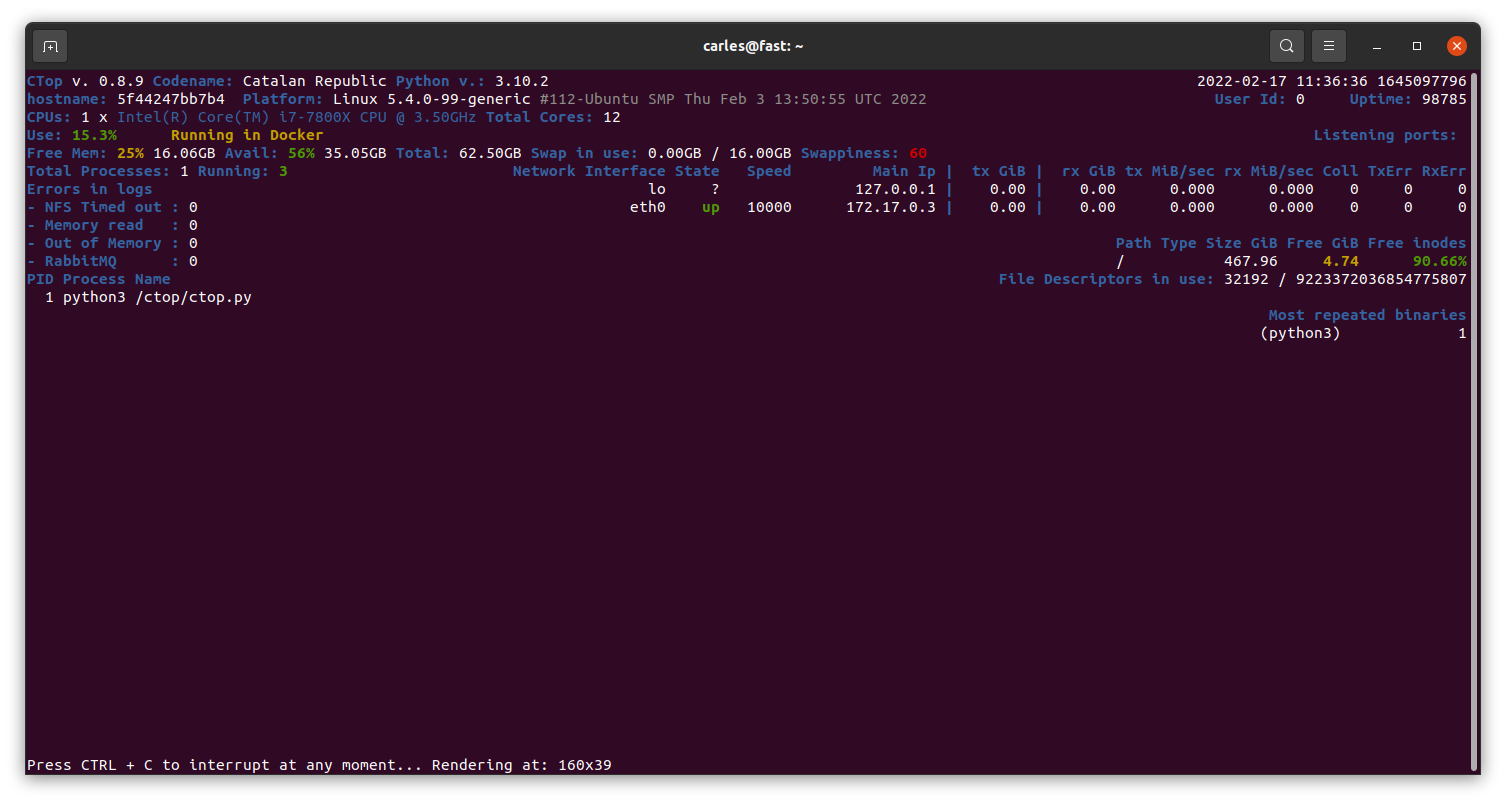

Currently in Master there is a stable version of 0.8.9 mainly fixing https://gitlab.com/carles.mateo/ctop/-/issues/51 which was not detecting when CTOP was running inside a Docker Container (reporting Unable to decode DMI).

Added 20 new pages with some tricks, like clearing the logs (1.6GB on my workstation), using some cool tools, using bind mounts, and using Docker on Windows from the command line without activating Docker Desktop or WSL.

https://leanpub.com/docker-combat-guide/

BTW, if you work with Windows and you cannot use Docker Desktop due to the new license, in this article I explain how to use Docker standalone on Windows, without using WSL.

One of my SATA 2TB 2.5″ 5,400 rpm drives got damaged and was generating errors, so that was a fantastic opportunity to show how to detect and deal with the situation, replacing it with a new SATA 2TB 3.5″ 7,200 rpm drive and fixing the pool.

So I updated my ZFS on Ubuntu 20.04 LTS book.

I've updated Python 3 Exercises for Beginners and added to the repository of the Python 3 Combat Guide book a new example of how to parse the <title> tag from an HTML page using the BeautifulSoup package.
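The repository example uses BeautifulSoup, where the equivalent is roughly BeautifulSoup(html_text, "html.parser").title.string. As a dependency-free sketch of the same task, here is a version using the standard library's html.parser instead (a swapped-in technique, not the book's code; the class and function names are hypothetical):

```python
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collect the text inside the first <title> ... </title> pair."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":  # HTMLParser lowercases tag names
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data  # entities like &amp; arrive already decoded


def get_title(html_text: str) -> str:
    parser = TitleParser()
    parser.feed(html_text)
    parser.close()
    return parser.title.strip()
```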

I also added three new exercises, and solved them.

My friend Michela is translating the book to Italian. Thanks! :)

If you already purchased any of my books, you can download the updates of them when I upload them to LeanPub.

One of my students sent me this platform, which is a bit like HackerRank, but oriented to video games: you solve code challenges by programming video games.

He is having plenty of fun:

https://www.codingame.com/start

If you enjoyed the Free Videos about Symfony, there is more.

https://symfonycasts.com/screencast/api-platform

It talks about a bundle for building APIs.

And this tutorial explains in detail how to work with Webpack Encore:

https://symfonycasts.com/screencast/webpack-encore

A friend and colleague of mine, Michela, is following this bootcamp and recommends it for people learning from scratch.

https://udemy.com/course/100-days-of-code/

The company sent me the Stein, which is given to employees who complete two years of service, with a recognition and a celebration called “The Circle of Honor”.

I bought this book because I often discover new, better ways to explain things to my students.

Sometimes I buy books for beginners, as they explain what I want to do super fast, and sometimes they teach nice tricks that I didn't know. I have huge Django books, and it took me a long time to finish them.

A simpler book may only talk about how to install and work with it on one platform (Windows or Mac, for instance), but that is all I need, as the commands to create projects are the same across platforms.

For example, you can get to install and to create a simple project with ORM, connected to the database, very quickly.

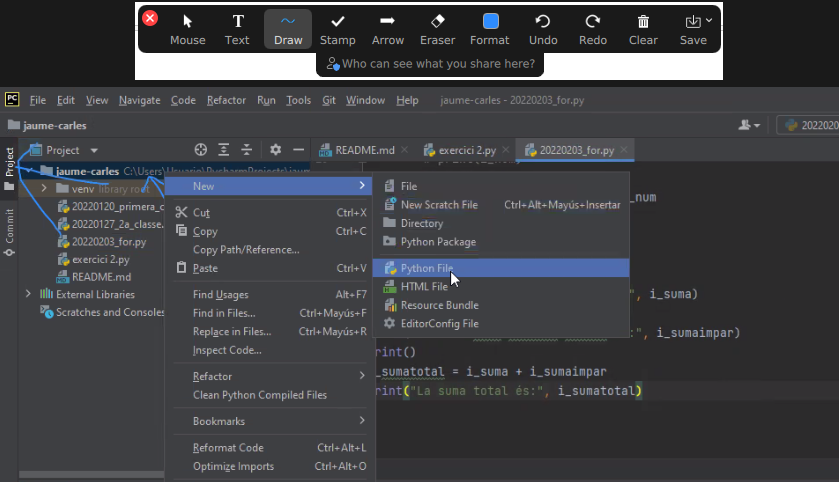

So I just discovered that Zoom has an option to draw in the shared screen, like Slack has. It is called Annotate. It is super useful for my classes. :)

I also discovered the icons in the Chat. It seems that not all video calls support them.

As I'm Working From Home, I needed a scanner. I looked on Amazon and all of them cost more than €200.

I changed my strategy and bought an All-In-One from HP, which cost me €68.

So I’ll have a scanner and a backup printer, which always comes handy.

The nightmare started when I tried to connect it to Ubuntu.

Ubuntu was not recognizing it. According to the manuals, you are forced to configure the printer from an Android/iPhone app or from their web page, which as I understand it is for Windows only. In any case, I would not install the proprietary drivers on my Linux system.

Annoyed, I installed the Android application, and it requested Location permissions to configure the printer. No way. It was not possible to configure the printer without giving GPS/Location permissions to the app, so I cancelled the process.

I grabbed a Windows 10 laptop and plugged in the All-in-one through USB. I ran the wizard to search for Scanners and Printers, but I was unable to use my scanner, only to configure it as a printer, so I was forced to install the HP drivers.

Irritated, I did, and they suggested configuring the printer so I could print from the Internet or from the phone. Thanks HP, you'll be the next SolarWinds big security hole. In order to use the WiFi I had to agree to open that security door, meaning the printer would be permanently connected to the Internet, sending and receiving information. I said no way: I'll only use it via USB.

Even selecting that, in order to scan, the Software forces me to create an account.

Disappointing. HP is making very big, stupid mistakes. They used to be a good company.

Since they stopped developing the drivers in Barcelona years ago, their Software and solutions (not the hardware) have gone to hell.

I checked the reviews in the App Store: so many people gave them 1 star and have problems… What a shame, the way they built this solution.

I made a donation to OpenShot Video Editor.

This is a great Open Source, multi-platform editor, so I wanted to support the creator.

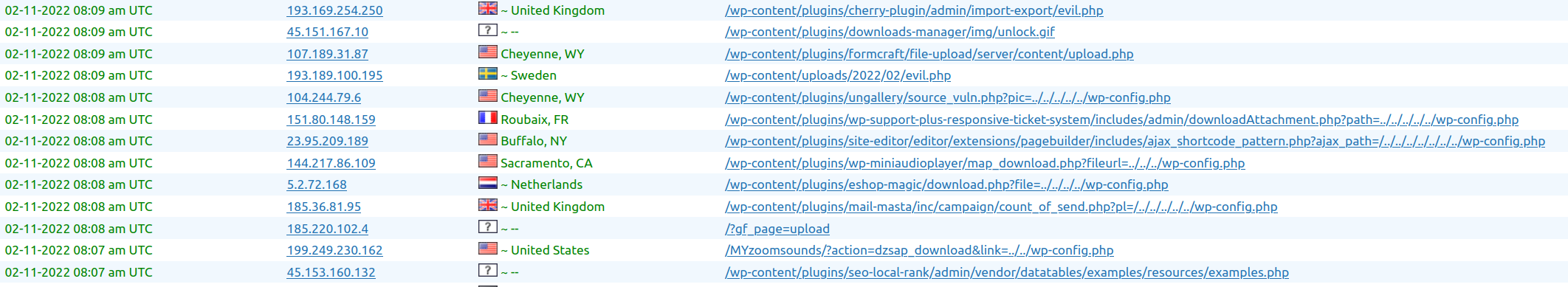

This is just a sample of a set of attacks on the blog in a 3-minute interval.

Another one this morning:

Now all are blocked in the Firewall.

This is a non-stop practice by spammers and pirates that has been going on for years.

It was almost three decades ago, when I was responsible for Linux at an ISP. I was installing a brand new Linux system connected to a service called “infovia”, at the time when the Internet was used through dial-up and modems, and during the installation it got hacked. I had the Ethernet connected. So, even then, this was happening.

The morning I was writing this, I blocked thousands of offending IP Addresses.

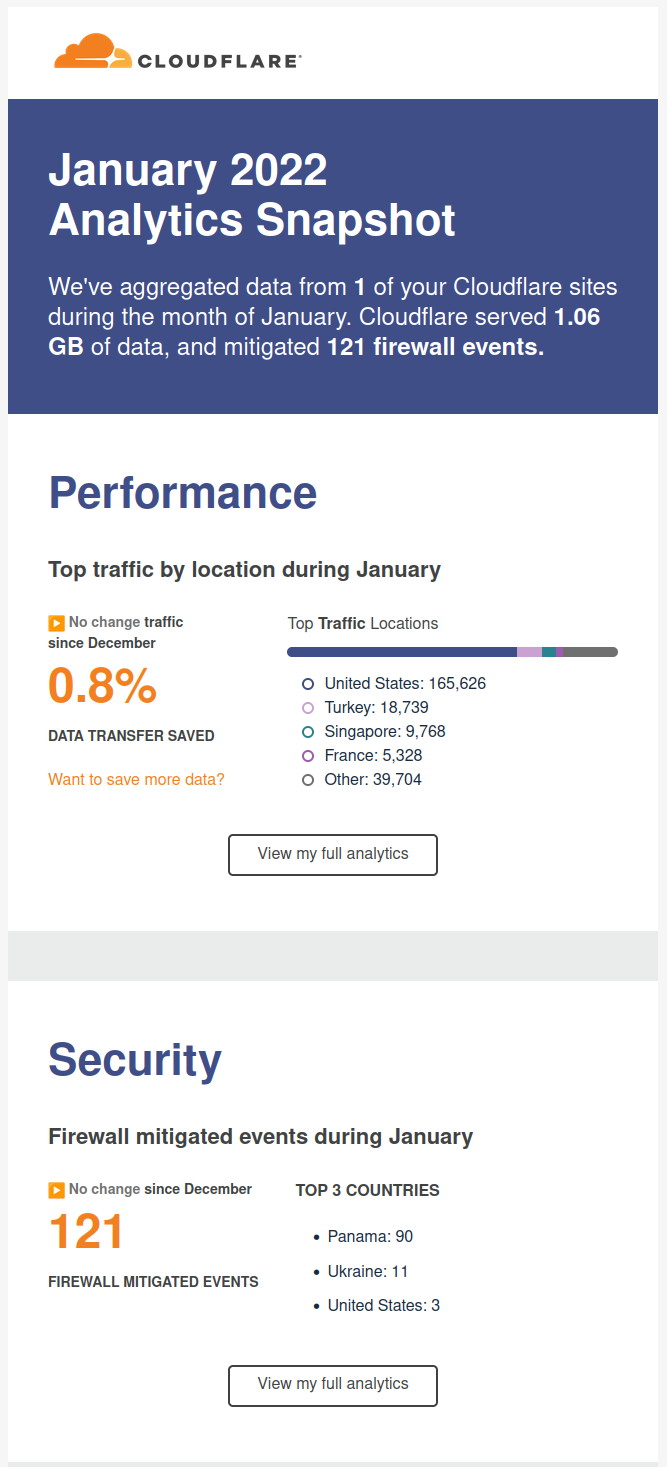

I recommend using CloudFlare, a CDN/Cache/Accelerator with DoS protection; even in its Free version it is really useful.

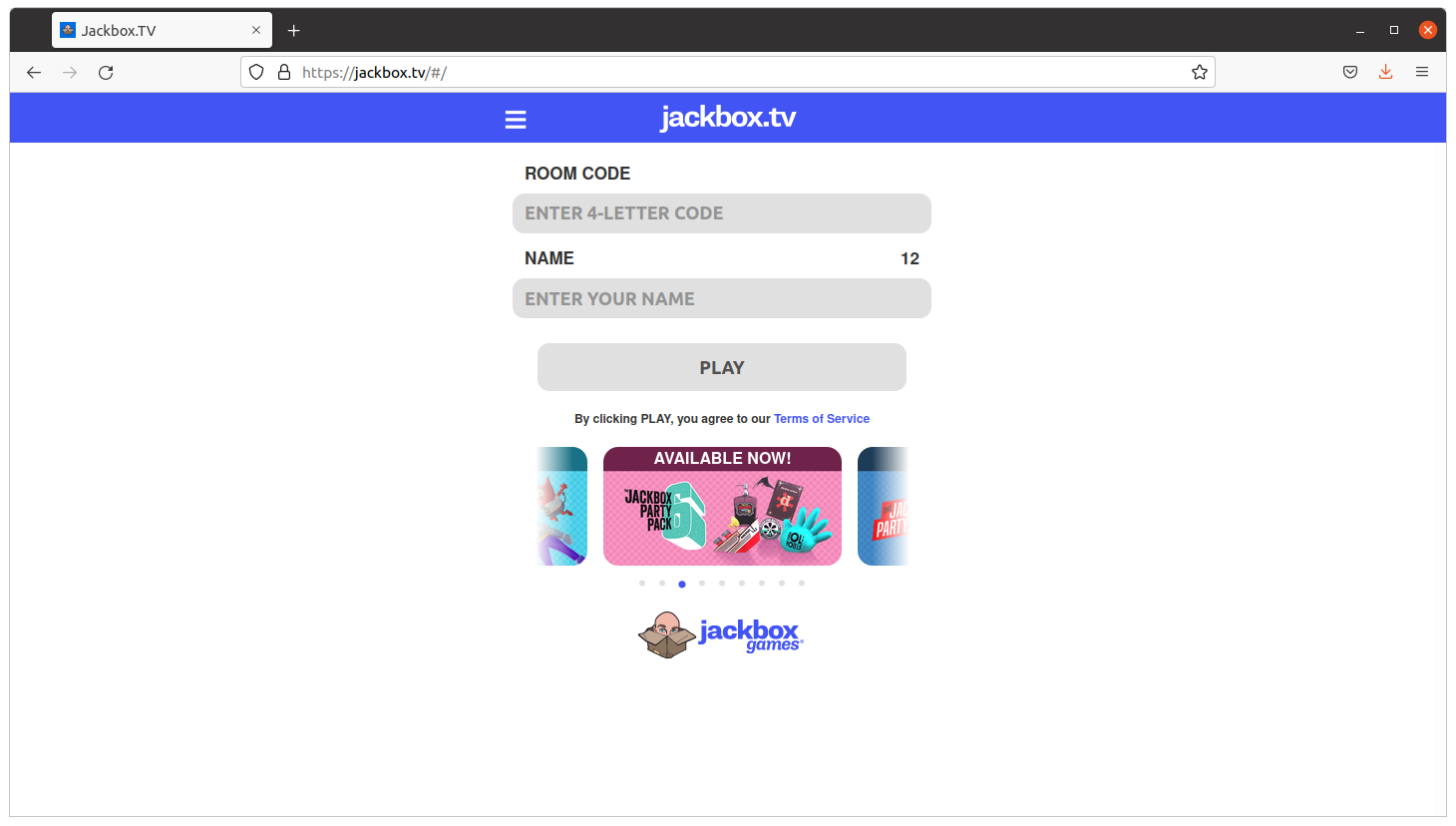

So I bring you a quiz-like game that you can play with your friends, family or work colleagues while working from home (WFH).

The idea is that the master shares screen and sound in Zoom; then the rest connect to jackbox.tv in their own browser and enter the code displayed on the master's screen, and an interactive game starts.

It is recommended that the master has two monitors so they can also play.

The games are fun: for example, a phrase appears and people have to complete it with a lie. If your friends vote for your phrase, believing it is true, you get points. If you vote for the true answer, you get points too.

Very funny and highly recommended.

<humor>Skynet sent another terminator to end me, but I terminated it. Its processor is now on display in my home.</humor>

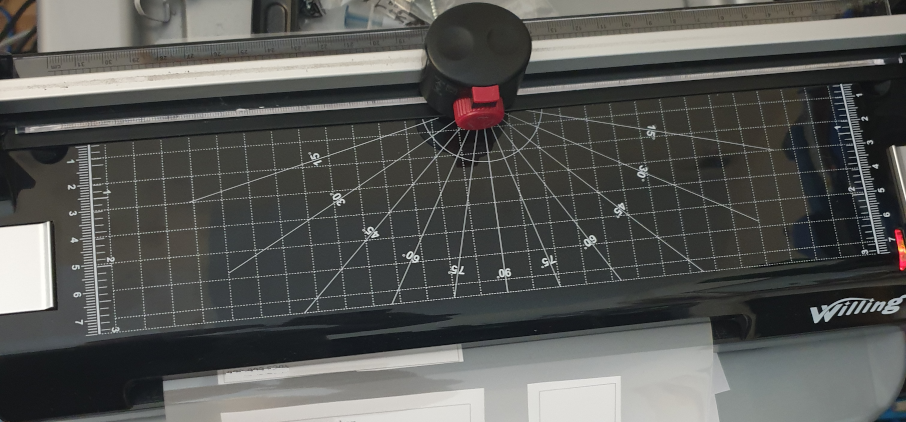

I bought a laminator.

It also has a ruler and a trimmer to cut the paper.

It was only €39 and I have to say that I'm very happy with the results.

It takes around 5 minutes to reach a hot-enough temperature, and it feeds the pages slowly, around 50 seconds per DIN-A4 page, but the results are worth the time.

I've protected my medical receipts and other valuable documents, and the work was perfect. No bubbles at all. It is no big deal if the plastic covers are not fed in 100% straight. Even if you pass an already laminated document through again, all is good.

One of my friends sent me this image.

It is old, but it's still fun. The joke assumes that parking or speed cameras will OCR the plate to build a query, and that the code is not well protected. So basically it is exploiting a SQL Injection.

Anybody working on the systems side, and with databases, knows how annoying those potential situations are.
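To make the joke concrete, here is a sketch using Python's built-in sqlite3 with an in-memory database; the table, the function names and the plate string are hypothetical. The unsafe version pastes the OCR'd text into the SQL (executescript is used so a second statement can run, as in the joke); the safe version binds it as a parameter, so it is stored as plain text.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE fines (plate TEXT, amount INTEGER)")
    conn.execute("INSERT INTO fines VALUES ('1234ABC', 100)")
    return conn


def record_plate_unsafe(conn: sqlite3.Connection, plate: str) -> None:
    # Vulnerable: the OCR text becomes part of the SQL source code.
    conn.executescript(f"INSERT INTO fines VALUES ('{plate}', 50);")


def record_plate_safe(conn: sqlite3.Connection, plate: str) -> None:
    # Safe: the driver binds the value, so it is never parsed as SQL.
    conn.execute("INSERT INTO fines VALUES (?, 50)", (plate,))
```

With a plate like X', 0); DROP TABLE fines; -- the safe function stores one odd-looking row, while the unsafe one drops the table.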

mysql> UPDATE wp_options set option_value='blog.carlesmateo.local' WHERE option_name='siteurl';

Query OK, 1 row affected (0.02 sec)

Rows matched: 1  Changed: 1  Warnings: 0

This way I set an entry in /etc/hosts and I can do all the tests I want.

It is on the main page, just after the recommended articles.

Here you can see the source code.

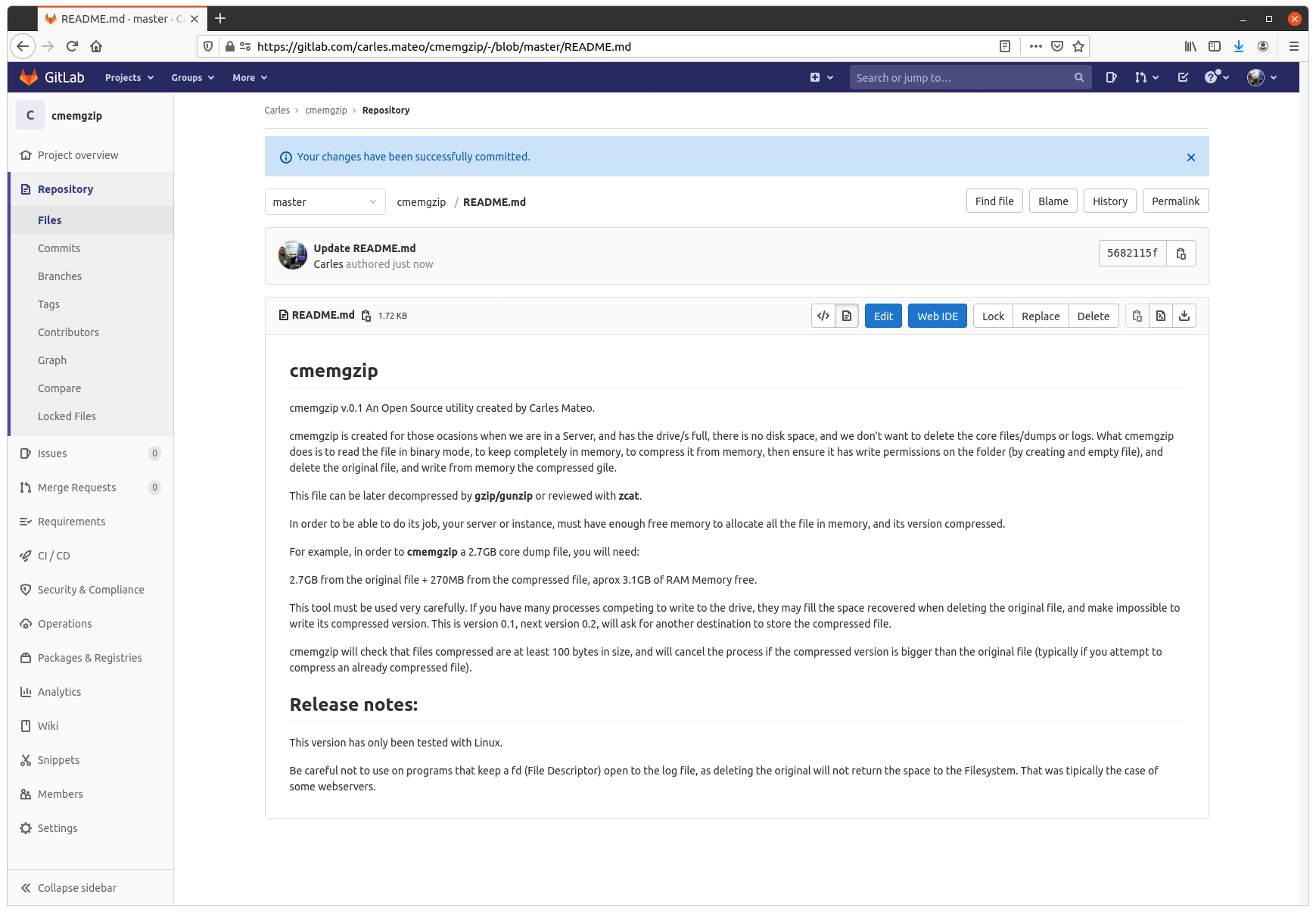

All the Operations Engineers and SREs who work with systems have faced the situation of having a Server with the disk full of logs, needing to keep those logs, and at the same time needing the system to keep running.

This is an uncomfortable situation.

I remember when I was being interviewed at Facebook, in Menlo Park, for an SDM (Software Development Manager) position in SRE, back in 2013-2014. They asked me about a situation where the Server disk was full and they deleted a big Apache log file, but the space didn't come back. They told me that nobody had ever been able to solve this.

I explained that Apache still had the fd (file descriptor) open and would keep writing to the end of that file, so even though they had removed the huge log file with the rm command, they would not get any free space back. I explained that the easiest solution was to stop Apache. They agreed and asked me how we could do the same without restarting the Webserver, and I said: by manipulating the file descriptors under /proc. They told me that I was the first person to solve this.
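A minimal Linux-only sketch of the /proc approach, assuming you have permission to read the target process's /proc/PID/fd (root, for Apache): list the descriptors whose link target ends in " (deleted)", then truncate the file through that link to release the space while the process keeps running. The function names are hypothetical, and this is an illustration rather than a tested production procedure.

```python
import os
from typing import List, Tuple


def deleted_open_files(pid: str = "self") -> List[Tuple[str, str]]:
    """Return (fd_link, target) for files a process holds open after they were rm'ed."""
    fd_dir = f"/proc/{pid}/fd"
    found = []
    for fd in os.listdir(fd_dir):
        fd_link = os.path.join(fd_dir, fd)
        try:
            target = os.readlink(fd_link)
        except OSError:
            continue  # descriptor closed while we were scanning
        if target.endswith(" (deleted)"):
            found.append((fd_link, target))
    return found


def release_space(fd_link: str) -> None:
    """Truncate the unlinked file through its /proc link; the process keeps its fd."""
    os.truncate(fd_link, 0)
```

If the writer did not open the log with O_APPEND, its next write lands at the old offset and leaves a sparse file, so check how the program opens its logs first.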

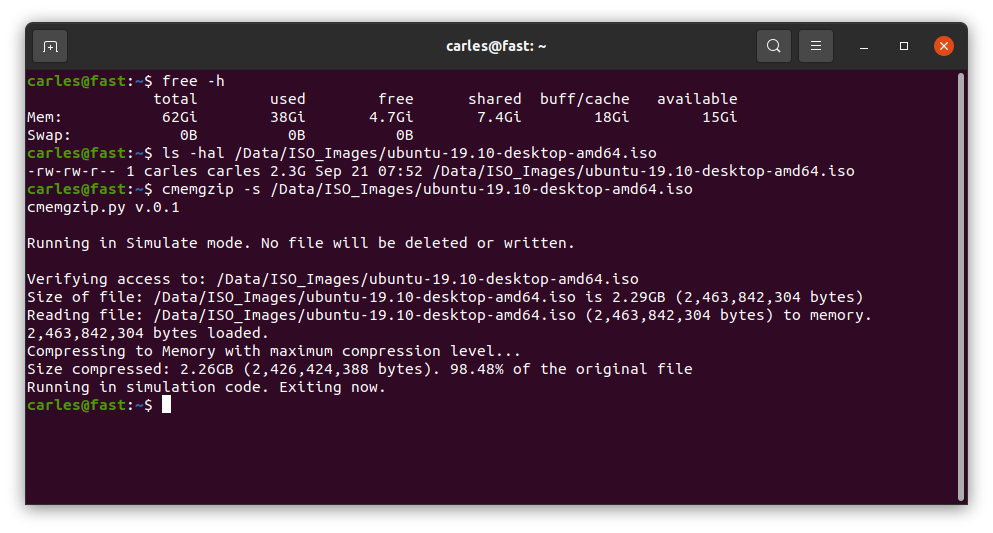

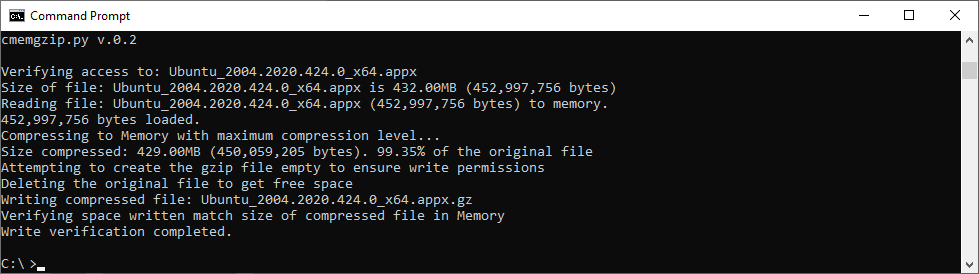

Basically, cmemgzip reads a file as binary and loads it completely into Memory.

Then it compresses it, also in Memory. Then it releases the memory used to keep the original, validates write permissions on the folder, checks that the compressed file is smaller than the original, deletes the original and, using the disk space now available, writes the compressed, smaller version of the file in gzip format.
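Those steps can be sketched in a few lines of Python. This is a minimal illustration under my own assumptions, not cmemgzip's actual code: it uses compression level 9, creates the .gz in exclusive mode as the write-permission check, and, unlike the real tool, does not ask for another path if the final write fails (the compressed data would then only survive in memory until the exception propagates).

```python
import gzip
import os


def compress_in_memory(path: str) -> str:
    """Replace `path` with `path + '.gz'`, using RAM instead of free disk space."""
    with open(path, "rb") as handle:
        original = handle.read()                           # 1. load the whole file
    compressed = gzip.compress(original, compresslevel=9)  # 2. compress in memory
    original_size = len(original)
    del original                                           # 3. drop the original bytes
    if len(compressed) >= original_size:
        raise ValueError("compressed data is not smaller; leaving the file untouched")
    target = path + ".gz"
    open(target, "xb").close()                             # 4. prove we can create the .gz
    os.remove(path)                                        # 5. free the disk space
    with open(target, "wb") as handle:                     # 6. write the compressed copy
        handle.write(compressed)
    if os.path.getsize(target) != len(compressed):         # 7. verify the size on disk
        raise OSError("write verification failed")
    return target
```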

Since version 0.3 you can specify an amount of memory to use for the blocks of data read from the file, so you can greatly limit the memory usage and compress files much bigger than the amount of memory.
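One way to read a file in fixed-size blocks while still producing a single stream that gunzip understands is to feed the blocks to one gzip.GzipFile backed by an in-memory buffer. This is a hypothetical sketch, not how cmemgzip implements its block option; peak memory is roughly one block plus the compressed output (plus some copying overhead), and the compressed output still has to stay in RAM until the original can be deleted.

```python
import gzip
import io


def compress_in_blocks(path: str, block_size: int = 64 * 1024 * 1024) -> bytes:
    """Read a file block by block into one in-memory gzip stream."""
    buffer = io.BytesIO()
    with open(path, "rb") as source, \
            gzip.GzipFile(fileobj=buffer, mode="wb", compresslevel=9) as sink:
        while True:
            block = source.read(block_size)  # only one block of the original in RAM
            if not block:
                break
            sink.write(block)
    # Closing the GzipFile wrote the trailer but left the BytesIO open.
    return buffer.getvalue()
```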

If for whatever reason the gz version cannot be written to disk, you'll be asked for another path.

I mentioned before about File Descriptors, and programs that may keep those files open.

So my advice here is: if you have to compress Apache logs or logs from a multi-threaded program, the disk is full, and several instances may be trying to write to the log file, stop the Apache service if you can, and then run cmemgzip. In the future I want to add the ability to auto-release open fds, but this is delicate and requires a lot of time to make sure it will be reliable in all circumstances and will obey the exact wishes of the SRE performing the operation, without unexpected, undesired side effects. It can be implemented with a new parameter, so the SysAdmin will know what they are requesting.

You can decompress it later with gzip/gunzip.

So about cmemgzip you can git clone the project from here:

https://gitlab.com/carles.mateo/cmemgzip

git clone https://gitlab.com/carles.mateo/cmemgzip

The README.md is very clear:

https://gitlab.com/carles.mateo/cmemgzip/-/blob/master/README.md

The program is written in Python 3, and I released it under the MIT License, so you can use it freely, in the true spirit of Open Source.

This is version 0.3.

I have only tested it in:

It should work in all the platforms supporting Python, but if you want to contribute testing for other platforms, like Windows 32 bit, Solaris or BSD, let me know.

You can create a ramdisk and compress the file there, then delete the original, move the compressed file from the ramdisk to the hard drive, and unload the ramdisk Kernel Module. However, we very often face this problem in Docker containers or in instances that don't have the Kernel module installed. It is much easier to run cmemgzip.

Another strategy for the future is to have a folder based on ZFS with compression enabled. Again, ZFS must be installed on the system, and this doesn't happen with Docker containers.

cmemgzip is designed to work when there is no free space; if there is free space, you should use the gzip command.

In a real emergency, when you have neither enough RAM, nor disk space, nor the possibility to send the log files to another server to be compressed there, you could stop using the swap (swapoff), use fdisk to change the swap partition's type to Linux, format it as ext4, mount it, and use that space to compress the files. After moving the compressed files to the original folder, use fdisk to change the old swap partition's type back to Swap, recreate the swap area with mkswap, and enable swap again (swapon).

As you can imagine, the weak point of cmemgzip is that, since the file is completely loaded into memory and then compressed, the free memory required on the Server/Instance/VM is at least the size of the file plus the size of the compressed file. You guessed right. That's true.

If there is not enough memory for loading the file in memory, the program is interrupted gracefully.

I decided to keep it simple, but this can be an option for the future.

So if your VM has 2GB of Available Memory, you will be able to use cmemgzip on uncompressed log files of around 1.7GB.
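The arithmetic behind that figure: if r is the compressed size divided by the original size, RAM must hold the file S plus its compressed copy r × S, so the largest file that fits in M of available memory is M / (1 + r). With M = 2GB and an assumed r of 0.15 (logs often compress well; the Sherlock Holmes text below compressed to about 0.37), that gives about 1.74GB. A tiny helper, with a hypothetical name:

```python
def max_file_size(available: float, ratio: float) -> float:
    """Largest file that fits when RAM holds the original plus its compressed copy.

    `ratio` is compressed size / original size (an estimate you supply).
    """
    return available / (1 + ratio)
```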

In version 0.3 I implemented the ability to load chunks of the original file and compress them into memory, so I would be able to use less memory. But then the compression is less efficient, and initial tests point out that I'll have to keep a separate file for each compressed chunk. So I would also need to create a decompression tool, whereas now the output is completely compatible with gzip/gunzip, zcat, the file extractor from Ubuntu, etc…

For a big Server with a logfile of 40TB, around 300GB of RAM should be sufficient (the Servers I use have 768 GB of RAM normally).

Honestly, nowadays we find ourselves more frequently with VMs or Instances in the Cloud with small drives (10 to 15GB) and enough Available RAM, rather than Servers with huge mount points. These kinds of instances, which means scaling horizontally, make it more difficult to have NFS Servers where we can move those logs, for security.

So cmemgzip covers some specific cases very well, while it is not useful for all scenarios.

I think it's safe to say it covers 95% of the scenarios I've found in the past 7 years.

cmemgzip will not help you if you run out of inodes.
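Since cmemgzip is for a full disk and won't help when inodes run out, it can be worth checking both before choosing a tool. df -h and df -i show this; the sketch below reads the same figures with os.statvfs (the function name is hypothetical, and the values are those available to non-root users).

```python
import os


def free_space_report(path: str) -> dict:
    """Free bytes and inodes on the filesystem holding `path` (non-root view)."""
    st = os.statvfs(path)
    return {
        "free_bytes": st.f_bavail * st.f_frsize,  # blocks available to non-root users
        "free_inodes": st.f_favail,               # inodes available to non-root users
        "total_inodes": st.f_files,
    }
```

If free_bytes is near zero but free_inodes is not, cmemgzip is a candidate; if free_inodes is zero, you need to delete or archive files instead.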

Usage is very simple, and I kept it very verbose as the nature of the work is Operations, Engineers need to know what is going on.

I return error level/exit code 0 if everything goes well or 1 on errors.

./cmemgzip.py /home/carles/test_extract/SherlockHolmes.txt

cmemgzip.py v.0.1

Verifying access to: /home/carles/test_extract/SherlockHolmes.txt

Size of file: /home/carles/test_extract/SherlockHolmes.txt is 553KB (567,291 bytes)

Reading file: /home/carles/test_extract/SherlockHolmes.txt (567,291 bytes) to memory.

567,291 bytes loaded.

Compressing to Memory with maximum compression level…

Size compressed: 204KB (209,733 bytes). 36.97% of the original file

Attempting to create the gzip file empty to ensure write permissions

Deleting the original file to get free space

Writing compressed file /home/carles/test_extract/SherlockHolmes.txt.gz

Verifying space written match size of compressed file in Memory

Write verification completed.

You can also simulate, without actually deleting or writing to disk, just in order to know what will be the

There are no third party libraries to install. I only use the standard ones: os, sys, gzip

So clone it with git in your preferred folder and just create a symbolic link with your favorite name:

sudo ln --symbolic /home/carles/code/cmemgzip/cmemgzip.py /usr/bin/cmemgzip

I like to create the link without the .py extension.

This way you can invoke the program from anywhere by just typing: cmemgzip

This is an answer that I gave to a question on Ask Ubuntu.

Question:

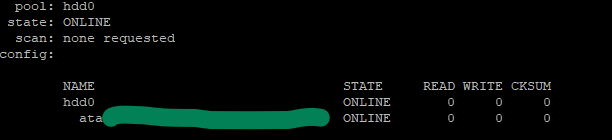

I have one HDD formatted as single disc zfs system on my server. It looks like the following:

Now I want to convert this to a zfs mirror without formatting the original disk. Any ideas?

Result should be something like:

hdd0

mirror0

ata-........................

ata-........................

Answer:

I reproduced your case in a VM, and I paste it here step by step. :)

Note: First of all, please do a backup of your data. I added an empty new disk, so ZFS had no doubt about which was the master drive. Although you should have no problem, as the first drive already forms part of the pool, a backup is recommended.

Quick answer: You need the zpool attach command.

Basically:

sudo zpool attach hdd0 existinghdd blankhdd

Then run:

zpool status

And you will see that a mirror has been created. Your data on the already existing drive will be kept, and will be replicated to the new one (Resilvered).

As ZFS only copies the actual data, the time this process takes depends on the amount of Data.

In my VM, 300 MB were replicated in 3 seconds, while in my experience with SAS and SATA drives, I was Resilvering 10 TB in less than 24 hours (for that I was using 10TB to 14TB SAS drives).

Now the long answer with everything I did in my Virtual Box VM:

lsblk --scsi

Identify the two empty drives by id:

ls /dev/disk/by-id/

Select one of them and create a pool like yours:

sudo zpool create hdd0 id_of_mydrive

Check that the pool hdd0 has been created and mounted at /hdd0:

sudo zpool status

sudo zpool list

sudo ls -al /hdd0

Fill it with some random data (or, better, copy files there) to get a drive with data like yours. I generated it from random:

sudo dd if=/dev/urandom of=/hdd0/file.000 bs=1M count=100 status=progress

sudo dd if=/dev/urandom of=/hdd0/file.001 bs=1M count=100 status=progress

sudo dd if=/dev/urandom of=/hdd0/file.002 bs=1M count=100 status=progress

Then I computed the checksums and saved them to verify later.

sudo su # Please note I continue as root

cd /hdd0

sha512sum file.000 > file.000.sha512

sha512sum file.001 > file.001.sha512

sha512sum file.002 > file.002.sha512

zpool list shows nearly 100GB of space.

zpool attach hdd0 id_of_mydrive id_of_the_drive_to_add

zpool status will show:

  pool: hdd0

 state: ONLINE

  scan: resilvered 301M in 0 days 00:00:03 with 0 errors

…

        NAME                          STATE     READ WRITE CKSUM

        hdd0

          mirror-0

            ata-VBOX_HARDDISK_VBa8... ONLINE       0     0     0

            ata-VBOX_HARDDISK_VB8c... ONLINE       0     0     0

errors: No known data errors

I verified the checksums.

zpool list will return as well 99GB of space available, as two drives of 100GB are being used in mirror.

So, as kaulex mentioned, the format is: zpool attach pool device new_device

Where device is your previous vdev with data (the single hard drive with Data in the ZFS pool named ‘hdd0’).

As I did, you want to use the Id of the device and not the name, so use the identifier in /dev/disk/by-id/ and not sdb, sdc… (Please note that adding /dev/ is not necessary). The reason not to use device names like sdb, sdc, sdea, etc… is that those names may change while the system is running or between reboots. The id never changes. In real systems, not Virtual Box, they may start with wwn or ata.
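To see which kernel name each persistent id currently points to before running zpool attach, you can resolve the by-id symlinks. A hypothetical sketch that skips the -partN entries so only whole disks are listed:

```python
import os
from typing import Dict


def disks_by_id(by_id_dir: str = "/dev/disk/by-id") -> Dict[str, str]:
    """Map each whole-disk persistent id to the kernel name it points to now."""
    mapping = {}
    for name in sorted(os.listdir(by_id_dir)):
        if "-part" in name:
            continue  # skip partition links such as ata-XXX-part1
        target = os.path.realpath(os.path.join(by_id_dir, name))
        mapping[name] = os.path.basename(target)  # e.g. 'sdb'
    return mapping
```

The keys are what you pass to zpool; the values (sdb, sdc…) are only for your own orientation and may change after a reboot.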

This is the 3D tree that I bought, which is programmable in Python :)

https://klarasystems.com/learning/webinars/best-practices-for-optimizing-zfs1/

This is a video from Klara Systems about best practices for ZFS.

https://developer.confluent.io/

https://developer.confluent.io/learn-kafka/

I read with surprise that Comcast is capping Internet use at 1.2TB per month, and that they will be charging for the excess.

So… if I contract a Backup with Carbonite, BackBlaze, DropBox or another company and I back up my 10TB of files, Comcast will ruin me with excess charges…

Or if I work from home, or the family watches a lot of Netflix…

I can only think of their Cast Strategy of CastNumberOfClientsToBankruptcy.

A joke to indicate that I think they will lose clients.

Imagine that yesterday I downloaded two images of Ubuntu, 5 GB, installed Call of Duty on one computer, 180 GB, installed a few Xbox games, 400 GB, listened to Spotify, 10 GB, watched YouTube, 3 GB, and watched Netflix, 4 GB: that is 602 GB in one day.

Not counting the bandwidth WFH (Working from Home).

Not counting Windows Updates, TV updates, consoles updates, Android Updates, Ubuntu updates…

And this is happening in the middle of the covid-19 pandemic, with so many people locked down at home, playing video games, watching movies, and desperately needing distractions.

<irony>Well done Comcast!</irony>

The small one (6 drives) fits perfectly in one ATX Bay; however, the SAS SSDs are too tall to fit.

I fitted 1 SATA3 SSD of 1TB and 4 SATA3 HDDs of 2TB.

The other one, the 3-Bay 5.25″ SAS/SATA enclosure for 12 drives, did not fit in the Corsair Obsidian Series 750D case, and I had to install it outside. It was a DIY job, as I explain in my book about assembling, fixing and upgrading your own PCs and laptops.

However, the 12 Gbps SAS SSDs were returning Checksum errors in ZFS when I copied information or ran a scrub. I was afraid the enclosure could only provide 6 Gbps at most, or had a poor connection; cables or expanders are usually the cause. To validate my theory, I ordered new cables to connect the drives directly to the HBA Controller, without the enclosure, and the drives stopped showing errors.

There is something good in everything bad: I have been able to document and explain in my book how to troubleshoot actual errors in ZFS, and talk about the problems with cables and the advantages of using a SAS controller even if you use SATA drives.

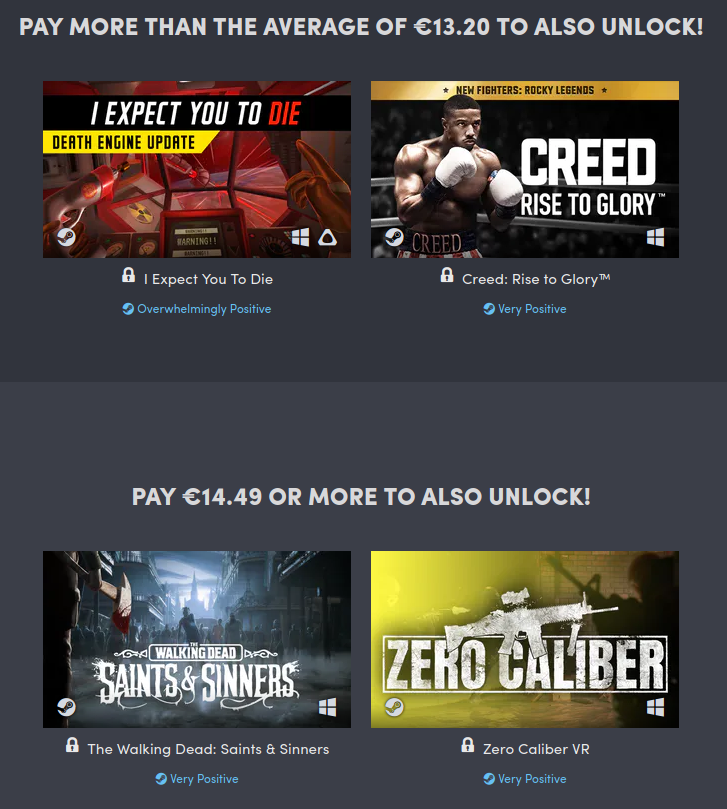

There is an offer with Microsoft Pass: we can use Disney+ for free for 30 days.

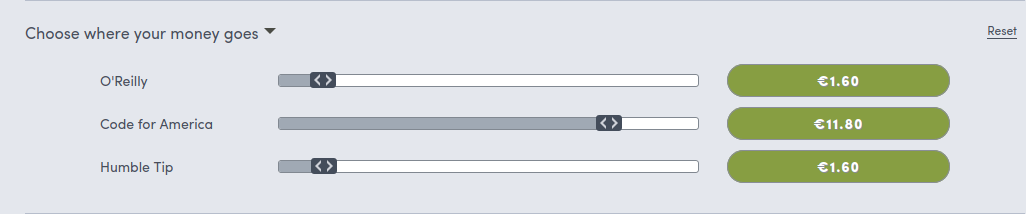

Don't forget to balance how much of your contribution goes to each party.

Unfortunately, by default most of the money goes to O'Reilly and the Humble Tip, and little to the Charity cause. You can change that on the web page when you go to the payment.