I was working on a Server (Xeon, 128 GB of RAM, 58 spinning drives of 10 TB each and 2 SSDs of 2 TB each) where I was testing the latest version of my Software.

Monitoring long-term tests, data validation, checking for memory leaks…

I noticed the Server was using 70 GB of RAM. Only 5.5 GB were used for buffers according to the usual tools (top, htop, free, cat /proc/meminfo, ps aux…) and no program was eating that amount, so where was the RAM?
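When no process accounts for the usage, it can help to look at the kernel-side fields of /proc/meminfo that the usual summaries do not put in front: Slab, SReclaimable, SUnreclaim and Shmem (which includes tmpfs). The following is only a minimal sketch, assuming a Linux /proc/meminfo; `meminfo_breakdown` is a name I made up for illustration, not a standard tool.

```shell
#!/bin/sh
# Hypothetical helper: print selected fields of a meminfo-style file in MiB.
# Usage: meminfo_breakdown [file]   (file defaults to /proc/meminfo)
meminfo_breakdown() {
    awk '
        # Lines look like "Slab:   1234567 kB": $1 is the key, $2 the value in kB.
        $1 ~ /^(MemTotal|MemFree|MemAvailable|Buffers|Cached|Shmem|Slab|SReclaimable|SUnreclaim):$/ {
            key = $1
            sub(/:$/, "", key)                        # drop the trailing colon
            printf "%-14s %8d MiB\n", key, $2 / 1024  # kB -> MiB, truncated
        }
    ' "${1:-/proc/meminfo}"
}
```

Running `meminfo_breakdown` with no argument reads the live /proc/meminfo. A large Slab or Shmem value next to a small process total is a hint that the memory is held by the kernel rather than by a program.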

The rest of the Servers were working well, including these models: the same model, the 4U60 with 64 GB of RAM, the 4U90 with 128 GB and the All-Flash-Array with 256 GB of RAM. They were only using around 8 GB of RAM, even under load.

The iSCSI shares were in use, with I/O, iSCSI initiators trying to connect and getting rejected, several requests per second, disk pulling, and the usual stuff. And this was the only unit using so much memory, so what was going on?

I checked some modules to see their memory consumption, but found nothing clear.

OK, after a bit of investigation one member of the Team said: “Oh, while you were on holidays we created a Ramdisk and filled it for some validations. We deleted it already but never rebooted the Server”.

OK. The easy solution would have been to reboot, but that would have hidden a memory leak if that was the cause.

No, I had to find the real cause.

I requested the assistance of one of my colleagues, a specialist Kernel Engineer.

He confirmed that processes were not taking that memory, and asked me to try dropping the caches.

So I did:

sync

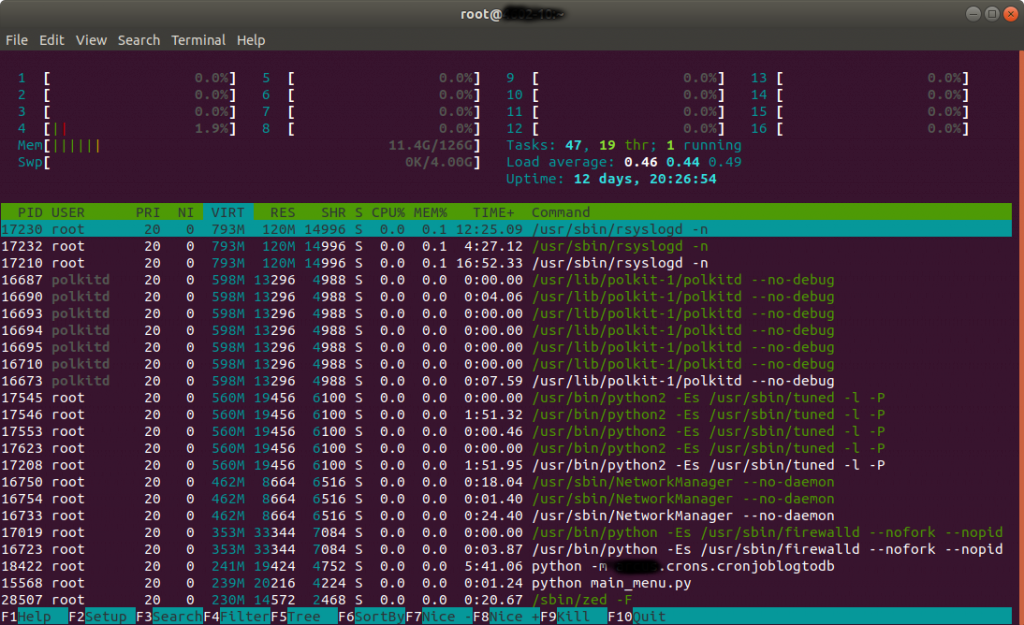

echo 3 > /proc/sys/vm/drop_caches

Then the memory usage dropped to 11.4 GB and stayed there while I sustained the load.
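For reference, writing 1 to drop_caches frees the page cache, 2 frees reclaimable slab objects (dentries and inodes), and 3 frees both. Only clean caches are dropped, which is why sync goes first. The two commands can be wrapped in a small helper that also reports how much memory came back. This is just a sketch under my own assumptions: `meminfo_kb` and `drop_caches_report` are hypothetical names, and the second function must run as root.

```shell
#!/bin/sh
# Hypothetical helper: value (in kB) of one field from a meminfo-style file.
# Usage: meminfo_kb FIELD [file]   (file defaults to /proc/meminfo)
meminfo_kb() {
    awk -v k="$1:" '$1 == k { print $2 }' "${2:-/proc/meminfo}"
}

# Hypothetical helper: flush dirty pages, drop clean caches, and report
# how much MemFree grew. Must run as root (it writes to drop_caches).
drop_caches_report() {
    before=$(meminfo_kb MemFree)
    sync                                  # write dirty pages out first
    echo 3 > /proc/sys/vm/drop_caches     # 3 = page cache + dentries/inodes
    after=$(meminfo_kb MemFree)
    echo "MemFree grew by $(( (after - before) / 1024 )) MiB"
}
```

If the number reported is close to the "missing" memory, the usage was reclaimable cache and not a leak. Memory that stays allocated after the drop is what deserves further investigation.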

That’s more normal, taking into account that we have 16 Volumes shared, one host is attempting like crazy to connect to Volumes that do not exist any more, Services and Cronjobs run in the background, and we conduct tests degrading the pool, removing drives, etc.

After the tests concluded, memory dropped to 2 GB, which is what we use when we’re not under load.

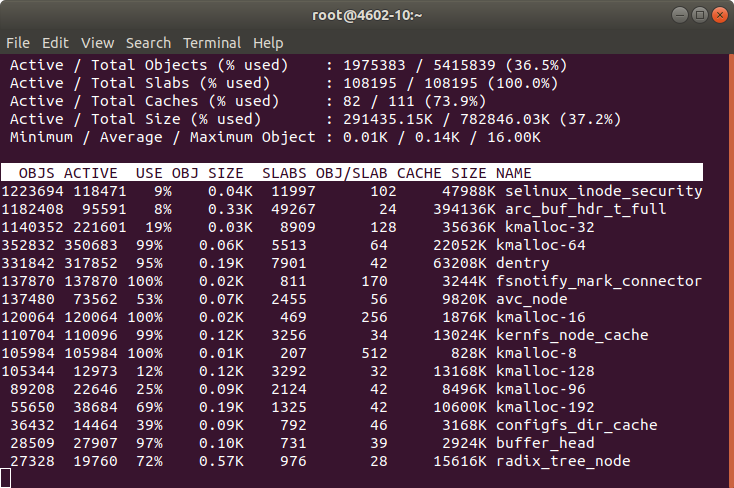

Note: To see the memory being used by the Kernel slab cache in real time, you can use these commands:

slabtop

sudo vmstat -m
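Both commands read /proc/slabinfo, so for a quick one-shot ranking you can also parse that file directly. The sketch below assumes the slabinfo 2.x column layout (name, active_objs, num_objs, objsize, …) and estimates each cache as num_objs × objsize, which ignores per-slab overhead. `top_slabs` is a hypothetical name, and the file is usually readable only by root.

```shell
#!/bin/sh
# Hypothetical helper: list the largest slab caches as "KiB name".
# Usage: top_slabs [file] [count]   (defaults: /proc/slabinfo, 10)
top_slabs() {
    awk '
        /^slabinfo/ || /^#/ { next }                   # skip the two header lines
        { printf "%d %s\n", ($3 * $4) / 1024, $1 }     # num_objs * objsize, in KiB
    ' "${1:-/proc/slabinfo}" | sort -rn | head -n "${2:-10}"
}
```

For example, `sudo sh -c '. ./top_slabs.sh; top_slabs'` (the script file name is made up) prints the ten biggest caches. Seeing dentry or inode caches at the top is common on a storage server and is the kind of memory that drop_caches can release.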