| This is the script for the upcoming episode of El nou món digital on Ràdio Amèrica Barcelona, which airs on Mondays at 10:30 Irish Time / 11:30 Catalonia Time / 02:30 Pacific Time. Disclaimer: I work for Activision Blizzard. All opinions are my own and do not represent any company. |

This is the page for the upcoming program, for the week of 31 October 2022.

Current affairs

- Elon Musk is now officially the owner of Twitter.

- And he has reportedly already ordered layoffs.

- https://www.engadget.com/elon-musk-reportedly-orders-layoffs-at-twitter-221121536.html

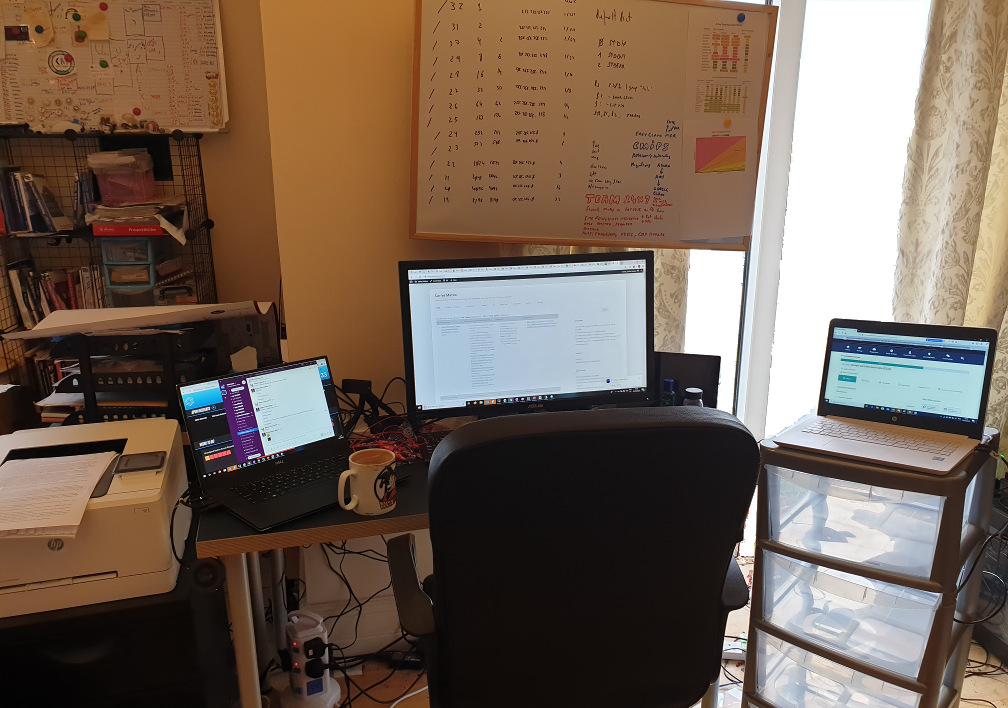

Tips for working on a laptop

- A very simple tip: if you work long hours on a laptop, I recommend buying an external monitor, a keyboard and a mouse.

- Your neck, your back and your eyesight will thank you. :)

- A 22″ Full HD monitor or better should suit you very well.

Phone tips

- On 25 October, WhatsApp suffered outages all over the world.

- I suggest having an alternative ready in case WhatsApp goes down. For example, Telegram.

- To save phone battery without losing features, we can turn off Bluetooth and Wi-Fi when we don't need them.

- We can also enable an option called Power saving (Estalvi d'Energia), which lowers the screen brightness, among other optimizations, so the battery lasts much longer.

- You can listen to WhatsApp voice messages without anyone else hearing them: just tap the play button and hold the phone to your ear as if you were on a call.

- Phones have sensors that detect when the phone is held against a surface, such as your ear.

- Always lock the screen before putting the phone in your pocket (to avoid triggering things by accident).

- The new trend is charging phones faster and faster. The new Xiaomi Redmi Note 12 Discovery Edition (210W HyperCharge) charges its 4,300 mAh battery in 9 minutes.

- The 120W HyperCharge model needs 15 minutes

- The same as Oppo's 150W SuperVOOC

- These are phones with excellent specs at around USD $300

- https://www.engadget.com/redmi-note-12-discovery-edition-210w-hypercharge-064020788.html
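To get a feel for what "4,300 mAh in 9 minutes" means, here is a rough back-of-the-envelope sketch in Python. It assumes the whole capacity is delivered at a constant rate, which real chargers do not do (they slow down near full and lose some energy as heat), so the results are only an average. The function names are made up for this illustration.

```python
def c_rate(minutes_to_full: float) -> float:
    """Average C-rate: how many full charges would fit into one hour."""
    return 60.0 / minutes_to_full


def average_current_amps(capacity_mah: float, minutes_to_full: float) -> float:
    """Average charging current in amps, assuming an even charge (a simplification)."""
    hours = minutes_to_full / 60.0
    return (capacity_mah / 1000.0) / hours


if __name__ == "__main__":
    # Figures quoted above: 4,300 mAh in 9 minutes, versus 15 minutes for the 120W model.
    print(f"Average current for 9 minutes: {average_current_amps(4300, 9):.1f} A")
    print(f"Average C-rate: {c_rate(9):.2f}C (9 min) vs {c_rate(15):.2f}C (15 min)")
```

In other words, a 9-minute charge fills the battery at an average pace of almost seven full charges per hour, compared with four per hour for a 15-minute charge.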

Electric vehicles

- The European Union is working on banning the sale of new petrol cars and vans from 2035.

Entertainment

- Apple has raised the price of Apple Music and Apple TV+

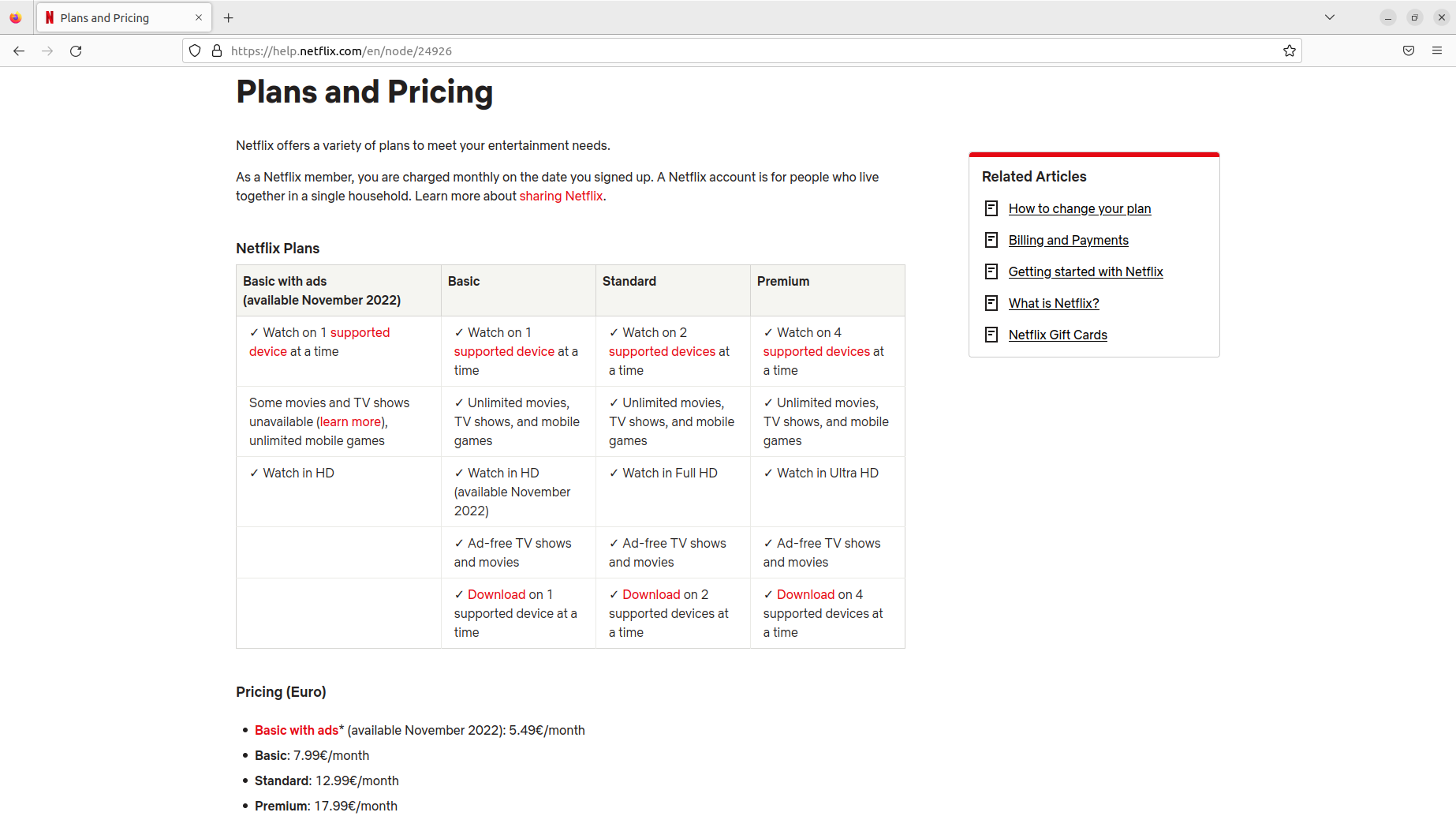

- On 3 November, Netflix will launch its cheaper, ad-supported plan, for €5.49 per month in Catalonia

- Sony's virtual reality headset for the PlayStation 5, the VR2, promises to be really fun.

- Other companies such as Facebook / Meta are working on delivering very good virtual reality experiences and are building more powerful and affordable VR headsets. (Meta bought Oculus and now makes VR headsets.)

Mac

- The new Apple iPad Pro, with the M2 chip, has been reviewed by several experts, and they speak very highly of it, especially of how fast it runs.

- It can even edit 8K video.

Movies / Series

- It isn't new, but if you liked Karate Kid, I recommend the series Cobra Kai, on Netflix, to watch as a family.

For upcoming programs…

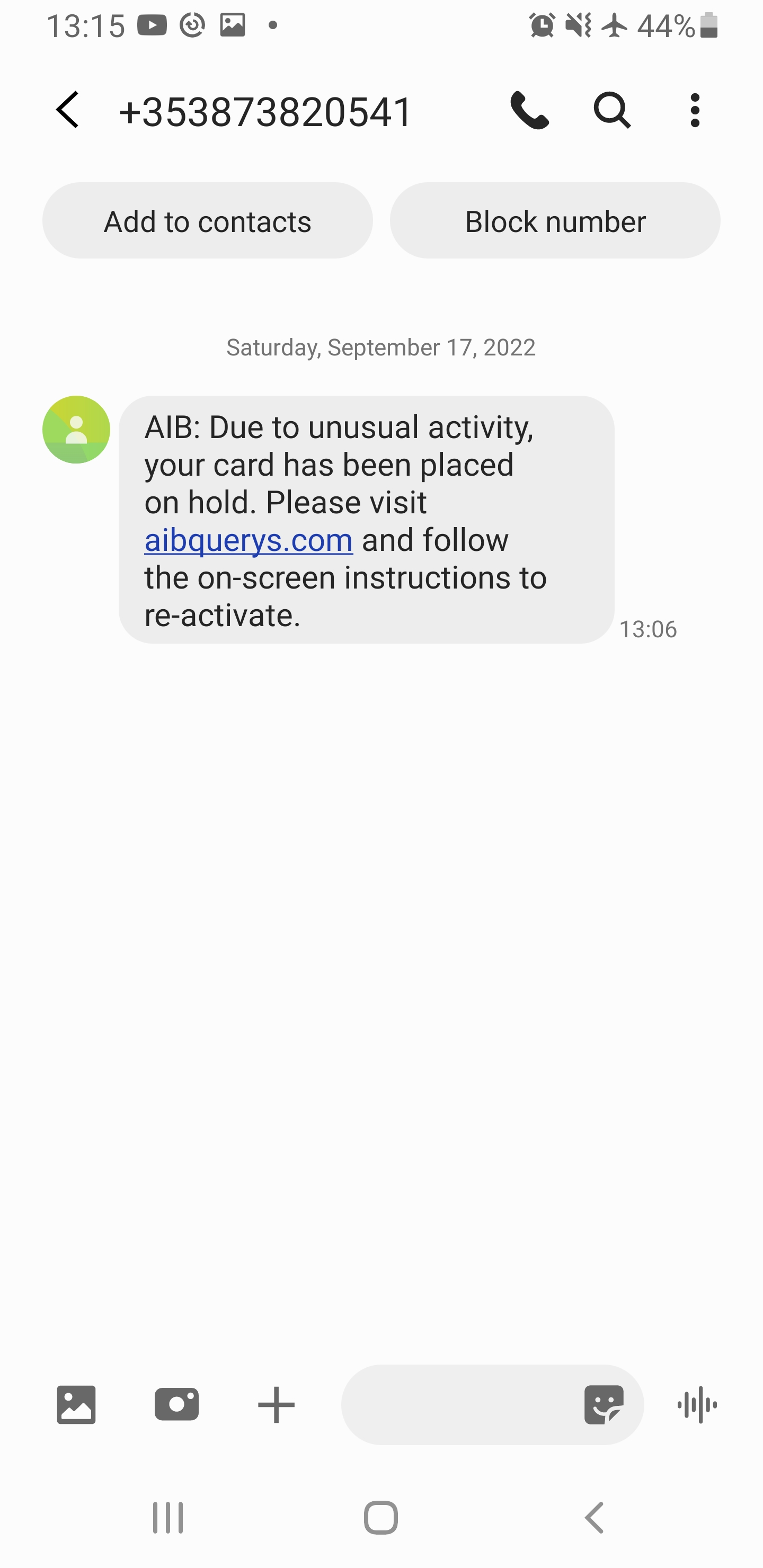

Security

- Always turn off Bluetooth on your phone, computer and car when you are not using it.

- Not only will we save battery, we may also spare ourselves a hack.

- Using wired headphones is much safer.

- One of those cases of multinationals brutally harassing innocent people

- A story of eBay executives sent to prison over a bullying campaign against people who published a magazine, including threats, deliveries of dead pigs, etc.

Tips

- Disable Caller ID

- On Android:

Step 1: On the Home Screen, tap Phone.

Step 2: Press the left menu button and tap Settings.

Step 3: Under Call settings, tap Supplementary services.

Step 4: Tap Caller ID to turn it on or off.

Entertainment

Video games

Nerd Culture

Internet / Society

Current affairs

Tips

- If your phone fills up, you can add a microSD card, which costs about €20 for a 64GB/128GB one.

- You can also move your photos to a computer or to an external hard drive.

- Taking a screenshot:

Windows

- Print Screen or Alt + Print Screen, then paste into an application such as Paint or GIMP

Linux

- Print Screen, Alt + Print Screen, Shift + Print Screen

Android:

- Search Google for your model.

- Press the volume down button and the power button at the same time, and hold them for half a second.

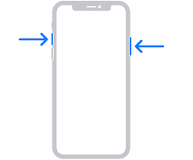

iPhone:

- 13 and other models with Face ID: press the side button and the volume up button at the same time.

- Models with Touch ID: press the Home button (the round one) and the side (power) button.

- On models with the button on top, press the top button and the Home button.

- iPhone 12

https://support.apple.com/en-ie/HT200289

Women in science and technology

- Ada Lovelace

Zoom tips

- Zoom is a videoconferencing system widely used for remote work in companies.

- It can be used for free, with a time limit per call. Recently the limit for one-to-one calls was set to 40 minutes; until now they were unlimited and widely used by teachers, therapists, private individuals…

- I use the professional version for my classes: I pay €18 a month and can teach up to 100 people with no time limit.

- You can share your screen, and you can request remote control. The other person has to authorize you.

- It is widely used for presentations.

- Also for playing games with friends.

- For fixing your aunt's computer.

- You can also draw on the other person's screen, and add arrows and text. The option is called Annotate.

- To avoid audio problems, it is recommended to connect a headset (headphones with a microphone). On TV3 we have seen many people with audio feedback problems because they were not using headphones and a mic.

- You can also blur the background or set an image or a video as the background

- You can mute yourself (Mute Audio) and stop your picture (Stop Video)

- You can record video. Very useful for classes, so that students can go back over the lesson afterwards.

Mobile phone tips

Copy and paste:

- You can select, copy and paste text by pressing on the text with your finger for two or three seconds. A menu will open, and two small handles will mark the start and the end of the selected text.

Job-hunting tips

- Study for a degree

- It can be done in the evenings, remotely, over the Internet

- You learn a lot

- You make good contacts

- Some governments (Ireland, Scotland…) fund or subsidize it.

- Take a programming course

- The Generalitat de Catalunya offers free courses for the unemployed

Proposed topics for upcoming programs

- The importance of LinkedIn in a job-search strategy. Tips and advice.

- Learn to program and change your career path. Tips, advice, success stories.

- Tips for using software more efficiently:

- Searching for text within web pages and documents

- Copy and paste

- Using Google Docs to work on a document together

- Sharing files and videos with Google Drive

- Ergonomics: how using an external monitor, keyboard and mouse can make neck pain go away. Using suitable lighting and a good chair.

- The importance of backups. Keeping copies geographically distributed as part of a disaster recovery strategy.

- How to free up space on your phone. Moving photos to an SD card or to a computer. Files that WhatsApp stores and never frees.

- How to use bold, italics, and mark a code block in WhatsApp.

- The experience of university and master's students, costs, countries that subsidize studies.

- Ireland

- Scotland

- Question time. Send your question to the program team and we will answer it in an upcoming program.
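As a small preview of the WhatsApp formatting topic listed above, here is a minimal Python sketch. WhatsApp shows text wrapped in asterisks as bold, text wrapped in underscores as italic, and text wrapped in three backticks as monospace. The helper names below are invented for this example; they only build the text you would type or paste into a chat.

```python
# Three backticks, built this way so the marker can be shown inside a code block.
FENCE = "`" * 3


def bold(text: str) -> str:
    """Wrap text in asterisks, which WhatsApp displays as bold."""
    return f"*{text}*"


def italic(text: str) -> str:
    """Wrap text in underscores, which WhatsApp displays as italic."""
    return f"_{text}_"


def monospace(text: str) -> str:
    """Wrap text in three backticks, which WhatsApp displays as monospace."""
    return f"{FENCE}{text}{FENCE}"


if __name__ == "__main__":
    # Prints a line you could paste into a chat to see all three styles at once.
    print(bold("Important"), italic("note"), monospace("print('hola')"))
```

The markers have to touch the text directly, with no space between the symbol and the first or last character.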

Previous programs

Previous program: RAB El nou món digital, Monday 24 October 2022 [CA]

All programs: RAB