I show some tips and tricks.

Author Archives: Carles Mateo

Install Ubuntu 26.04 LTS Desktop in VirtualBox

A step-by-step guide, with some tricks and troubleshooting.

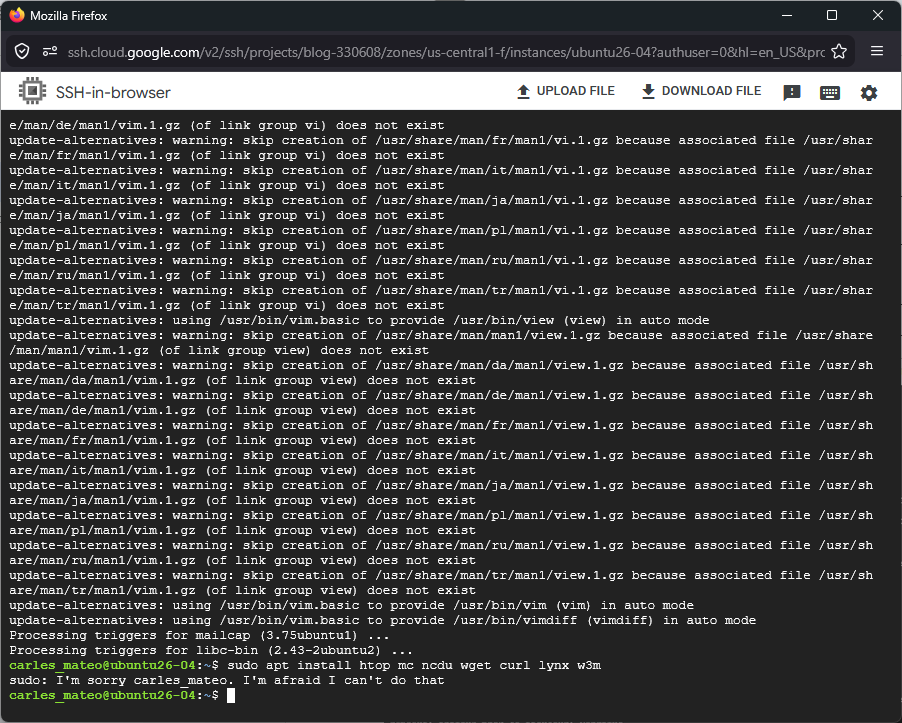

Sudo problems in Ubuntu 26.04 LTS with Google Cloud: I’m sorry user. I’m afraid I can’t do that

In the video I show how sudo stops working shortly after being used.

I ran this proof of concept and got the same problem:

sleep 300 && sudo cat /etc/lsb-release

Checking with:

id -nG

clearly showed that my user is part of google-sudoers. But then:

journalctl -u google-guest-agent -f

Displays:

Apr 26 17:54:07 ubuntu26-04 google_guest_agent[851]: Adding existing user carles_mateo to google-sudoers group.

Apr 26 17:54:07 ubuntu26-04 gpasswd[89648]: user carles_mateo added by root to group google-sudoers

Apr 26 17:54:07 ubuntu26-04 google_guest_agent[851]: Updating keys for user carles_mateo.

Apr 26 17:54:11 ubuntu26-04 google_guest_agent[851]: Updating keys for user carles_mateo.

Apr 26 17:57:11 ubuntu26-04 google_guest_agent[851]: ERROR non_windows_accounts.go:219 invalid ssh key entry - expired key: carles_mateo:...google-ssh {"userName":"carles.mateo@gmail.com","expireOn":"2026-04-26T17:57:05+0000"}

Apr 26 17:57:11 ubuntu26-04 google_guest_agent[851]: ERROR non_windows_accounts.go:219 invalid ssh key entry - expired key: carles_mateo:ssh-rsa...

Apr 26 17:57:11 ubuntu26-04 google_guest_agent[851]: Removing user carles_mateo.

Apr 26 17:57:11 ubuntu26-04 gpasswd[89736]: user carles_mateo removed by root from group google-sudoers

Installing Ubuntu 26.04 LTS in Google Cloud Compute Engine

The video shows, step by step, how to create an e2 instance in Google Cloud Compute Engine, increase the size of the disk, and install Ubuntu 26.04 LTS Server.

It also shows how the new htop looks, with its new IO options.

You may know that the utilities from coreutils have been rewritten in Rust, and so has sudo. I was wondering whether it would work well. I thought I was hitting the first problems when I found that, shortly after running sudo (for example, sudo apt install package), sudo stops working and I have to exit the shell and log in again.

I found that it is Google Cloud that removes my user from google-sudoers after 3 minutes.
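To catch the moment the membership disappears, the group database can be polled from a second terminal. This is a minimal sketch, not the guest agent's own logic: the helper names has_group and report_membership are hypothetical. It relies on id -nG being given a user name, which (as far as I know) makes it look the user up in the group database instead of printing the groups of the current login session, and that may be why a plain id -nG can still list google-sudoers after the removal.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed if the space-separated group list in $1
# contains the exact group name given in $2.
has_group() {
    local s_groups="$1"
    local s_wanted="$2"
    local s_group
    for s_group in $s_groups; do
        if [ "$s_group" = "$s_wanted" ]; then
            return 0
        fi
    done
    return 1
}

# Hypothetical helper: print one timestamped line saying whether the user in $1
# is currently listed in google-sudoers in the group database.
report_membership() {
    local s_user="$1"
    if has_group "$(id -nG "$s_user")" "google-sudoers"; then
        echo "$(date +%H:%M:%S) $s_user is in google-sudoers"
    else
        echo "$(date +%H:%M:%S) $s_user is NOT in google-sudoers"
    fi
}
```

For example, while true; do report_membership "$USER"; sleep 30; done prints a line every 30 seconds, which can be compared with the timestamps in the journalctl output.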

Solving silent exit error on eZ Launchpad

You have installed eZ Launchpad and you can execute the ez binary from your home folder or from other paths. However, when you execute it from a project folder that you cloned with git (with its .platform.app.yaml file), ez returns to the prompt without any error message.

The exit code is 255, but even if you strace the process you cannot find the exact problem.

Inside your project you run ez without any arguments, on a clean install of Ubuntu 24.04 LTS with PHP 8.3 or with PHP 8.4, without Xdebug, without OPcache, without a memory limit… and nothing works, with no visible error message in the logs or in the error output. However, if you run it outside the project folder it works, and it displays the usual help messages.

I reproduced this behavior on several Ubuntu computers. The fix I found is to execute ez with PHP 8.1.

You can install PHP 8.1 from the ondrej repository. Then you can either update the alternatives so that PHP 8.1 runs by default on your system, or create the project by invoking ez with PHP 8.1 explicitly:

php8.1 ~/ez create

This will kickstart the creation of your ez project based on Docker containers.
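If you would rather not change the system default, a small wrapper can keep the PHP 8.1 call in one place. This is only a sketch: the names first_available and ez81 are hypothetical, the commented commands are the usual way I know to install PHP 8.1 from the ondrej PPA, and it assumes the ez binary lives in your home folder as in the command above.

```bash
#!/usr/bin/env bash
# Usual steps to get PHP 8.1 next to the system PHP on Ubuntu (run by hand):
#   sudo add-apt-repository ppa:ondrej/php
#   sudo apt update
#   sudo apt install php8.1-cli
# Optional, to make it the default "php" for the whole system:
#   sudo update-alternatives --set php /usr/bin/php8.1

# Hypothetical helper: print the first of the given commands found on PATH.
first_available() {
    local s_cmd
    for s_cmd in "$@"; do
        if command -v "$s_cmd" >/dev/null 2>&1; then
            echo "$s_cmd"
            return 0
        fi
    done
    return 1
}

# Hypothetical wrapper: run ~/ez with php8.1, passing all arguments through.
ez81() {
    local s_php
    if ! s_php=$(first_available php8.1); then
        echo "php8.1 not found; install it first" >&2
        return 1
    fi
    "$s_php" "${HOME}/ez" "$@"
}
```

With the functions loaded in your shell, ez81 create does the same as the explicit command, and the rest of the system keeps using its default PHP.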

RAB El nou món digital 2022-11-14 [CA]

| This is the script for the upcoming program El nou món digital at Ràdio Amèrica Barcelona, which airs on Mondays at 10:30 Irish Time / 11:30 Catalonia Time / 02:30 Pacific Time. Disclaimer: I work for Activision Blizzard. Opinions are my own and do not represent any company. |

This is the page for the upcoming program, for the week of 14 November 2022.

Current affairs

- Meta (Facebook) will lay off 11,000 workers worldwide and will halt investment in some areas.

- It seems that experts expected the economic growth seen during the pandemic to continue, and it has not. The layoffs have already started.

- https://www.engadget.com/meta-mass-layoffs-facebook-111406126.html

- Twitter will also lay off 13,000 employees worldwide.

- It has also let go between 4,500 and 5,500 contractors.

- It seems the company was losing money, and these layoffs are an attempt to reduce costs.

- https://mashable.com/article/elon-musk-fires-more-twitter-workers

- Cryptocurrency bankruptcies. FTX, one of the major players in cryptocurrency trading, has gone bankrupt.

- https://www.engadget.com/binance-abandons-ftx-rescue-bid-222642965.html

Entreteniment

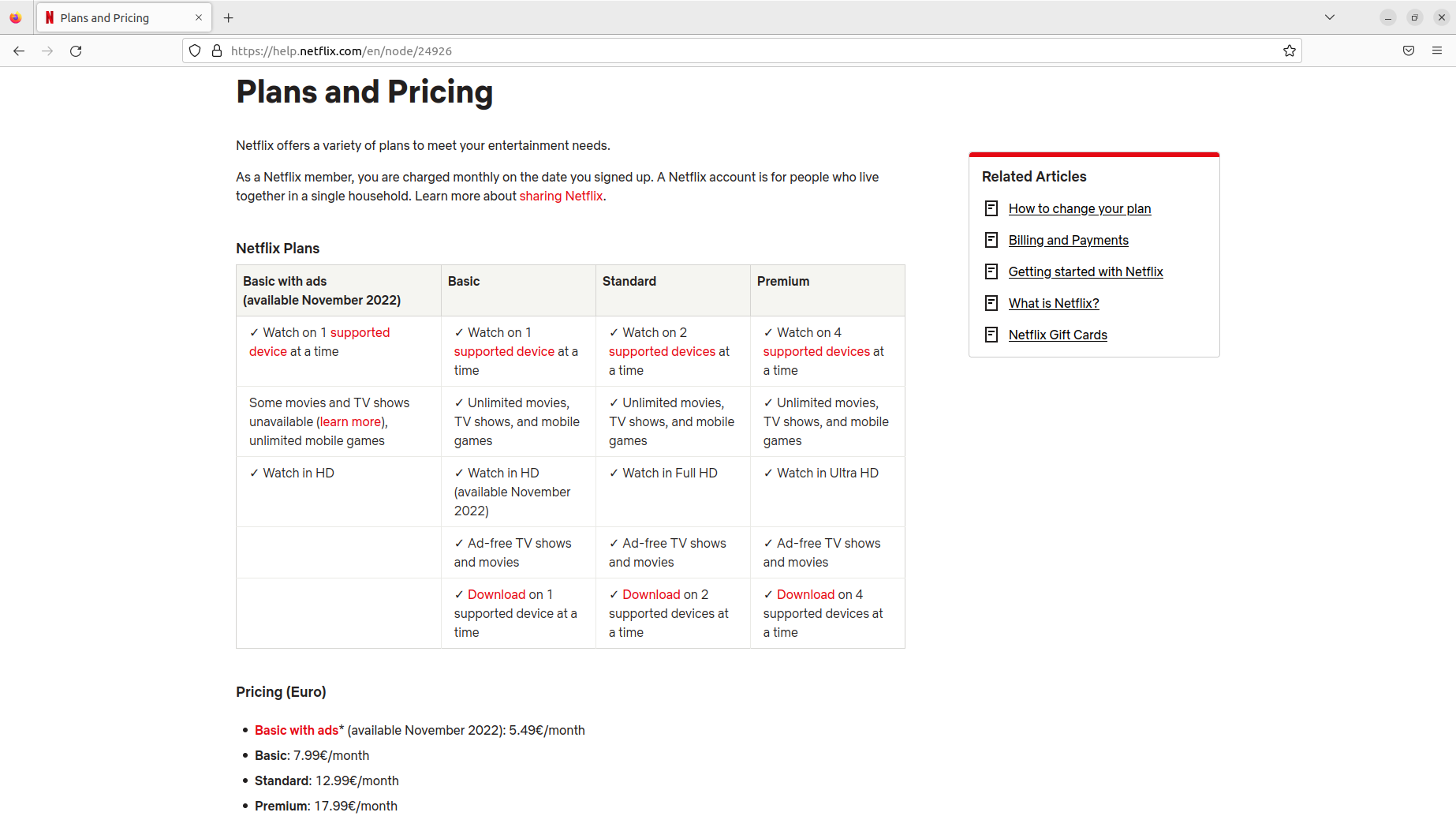

- Netflix ha creat un joc de trivial, interactiu, amb preguntes i respostes, que pot jugar una persona sola o dues, una contra d’altra, per torns.

- https://about.netflix.com/en/news/introducing-triviaverse-new-trivia-experience

Ecologia

- França obligarà per llei, els parkings de cotxes amb més de 80 places, a instal·lar panells solars.

- Actualment França general el 25% de la seva energia de fonts renovables, per sota dels seus veïns europeus.

- https://www.engadget.com/france-new-law-parking-lots-solar-panels-100435214.html

Trucs de telèfons

- Un truc per a seleccionar un text, o un pàrraf, d’un text, al mòbil. Es tracta de prèmer amb el dit sobre la pantalla durant dos segons, sobre la paraula que volem copiar. Llavors paraula se’ns selecciona i podem Copiar i Enganxar, o fer d’altres accions com cercar-la a google. També podrem estirar unes boletes que delimiten el text per a cercar per a un pàrraf més gran.

For upcoming programs…

Security

- Always turn off Bluetooth on your phone, computer and car when you are not using it.

- Not only will you save battery, you may also save yourself from being hacked.

- Using wired headphones is much more secure.

- One of those cases of multinationals brutally harassing innocent people

- The story of eBay executives sent to prison for a bullying campaign against people who published a magazine, including threats, sending dead pigs, etc.

Tips

- Turn off Caller ID

- On Android:

Step 1: On the Home Screen, tap Phone.

Step 2: Press the left menu button and tap Settings.

Step 3: Under Call settings, tap Supplementary services.

Step 4: Tap Caller ID to turn it on or off.

Entertainment

Video games

Nerd Culture

Internet / Society

Current affairs

Tips

- If your phone fills up, you can add a micro SD card; a 64GB/128GB one costs around €20.

- You can also move your photos to the computer, or to an external hard drive.

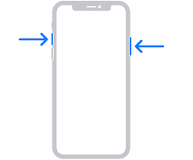

- Taking a screenshot:

Windows

- Print Screen, or Alt + Print Screen, and then paste into an application such as Paint or GIMP

Linux

- Print Screen, Alt + Print Screen, Shift + Print Screen

Android:

- Search Google for your model.

- Press the volume down key and the power key at the same time, and hold them for half a second.

iPhone:

- iPhone 13 and other models with Face ID: press the side button and the volume up button at the same time.

- Models with Touch ID: press the Home button (the round one) and the side (power) button.

- Models with the button on top: press the top button and the Home button.

- iPhone 12

https://support.apple.com/en-ie/HT200289

Women in science and technology

- Ada Lovelace

Zoom tips

- Zoom is a videoconferencing system that is widely used by companies for remote work.

- It can be used for free, with a time limit per call. Recently the limit for individual users was set to 45 minutes; until now it was unlimited, and it was widely used by teachers, therapists, individuals…

- I use the professional version for my classes; I pay €18 a month and can teach up to 100 people with no time limit.

- You can share your screen. You can also request remote control; the other person has to authorise you.

- It is widely used for presentations.

- Also for playing games with friends.

- For fixing your aunt's computer.

- You can also draw on the other person's screen, and add arrows and text. The option is called Annotate.

- To avoid audio problems it is recommended to connect a headset (headphones with a microphone). On TV3 we have seen many people with audio feedback problems because they were not using headphones and a microphone.

- You can also blur the background, or set an image or a video as the background

- You can mute yourself (Mute) and stop your camera (Stop Video)

- You can record video. Very useful for classes, so that students can go over the lesson again afterwards.

Mobile phone tips

Copy and Paste:

- You can select, copy and paste text by pressing your finger on the text for two or three seconds. A menu will open, and two small handles will mark the start and the end of the selected text.

Tips for finding a job

- Study for a degree

- It can be done in the evenings, remotely, over the Internet

- You learn a lot

- You make good contacts

- Some governments (Ireland, Scotland…) fund or subsidise it.

- Take a programming course

- The Generalitat de Catalunya runs free courses for the unemployed

Topics proposed for upcoming programs

- The importance of LinkedIn in a job-hunting strategy. Tips and advice.

- Learn to program and change your career path. Tips, advice, success stories.

- Tips for using programs more efficiently:

- Searching for text within web pages and documents

- Copy, paste

- Using Google Docs to work on a document together

- Sharing files and videos with Google Drive

- Ergonomics: how using an external monitor, keyboard and mouse can make neck pain disappear. Use suitable lighting and a good chair.

- The importance of backups. Keep copies geographically distributed as part of a disaster recovery strategy.

- How to free up space on your phone. Move photos to an SD card or to the computer. Files that WhatsApp stores and never frees.

- How to use bold, italics, and mark a code block in WhatsApp.

- The student experience at university and in master's degrees, costs, countries that subsidise.

- Ireland

- Scotland

- Answering questions. Send your question to the program's team and we will answer it in an upcoming program.

Previous programs

Previous program: RAB El nou món digital del Dilluns 31 d’Octubre de 2022 [CA]

All programs: RAB

RAB El nou món digital 2022-11-07 [CA]

| This is the script for the upcoming program El nou món digital at Ràdio Amèrica Barcelona, which airs on Mondays at 10:30 Irish Time / 11:30 Catalonia Time / 02:30 Pacific Time. Disclaimer: I work for Activision Blizzard. Opinions are my own and do not represent any company. |

This is the page for the upcoming program, for the week of 7 November 2022.

Science

- NASA has sent to space a 3D printer capable of printing a meniscus for wounded soldiers.

Entertainment

- Netflix presents Enola Holmes 2, the second part of the story of Sherlock Holmes's sister.

- On Disney Plus I have been watching “War of the Worlds”, a remake of the classic, made by Canal+. It is quite good. A bit harsh.

Video games

- The long-awaited Sony virtual reality headset for the PlayStation 5 console, the VR2, arrives on 22 February. It will cost $550 in the United States and 600 euros in Europe.

- The controllers have motion control and the headset has eye tracking

Tips for working with a laptop

- Taking a screenshot:

Windows

- Print Screen, or Alt + Print Screen, and then paste into an application such as Paint or GIMP

Linux

- Print Screen, Alt + Print Screen, Shift + Print Screen

Phone tips

Taking a screenshot on Android:

- Search Google for your model.

- Press the volume down key and the power key at the same time, and hold them for half a second.

iPhone:

- iPhone 13 and other models with Face ID: press the side button and the volume up button at the same time.

- Models with Touch ID: press the Home button (the round one) and the side (power) button.

- Models with the button on top: press the top button and the Home button.

- iPhone 12

https://support.apple.com/en-ie/HT200289

Gadgets

- Audio-Technica has released a new version of its portable turntable, the Sound Burger.

- It is priced at 229 euros.

- https://www.engadget.com/audio-technica-2022-sound-burger-announced-130041048.html


Previous programs

Previous program: RAB El nou món digital del Dilluns 31 d’Octubre de 2022 [CA]

All programs: RAB

RAB El nou món digital 2022-10-31 [CA]

| This is the script for the upcoming program El nou món digital at Ràdio Amèrica Barcelona, which airs on Mondays at 10:30 Irish Time / 11:30 Catalonia Time / 02:30 Pacific Time. Disclaimer: I work for Activision Blizzard. Opinions are my own and do not represent any company. |

This is the page for the upcoming program, for the week of 31 October 2022.

Current affairs

- Elon Musk is now officially the owner of Twitter.

- And he has already announced layoffs.

- https://www.engadget.com/elon-musk-reportedly-orders-layoffs-at-twitter-221121536.html

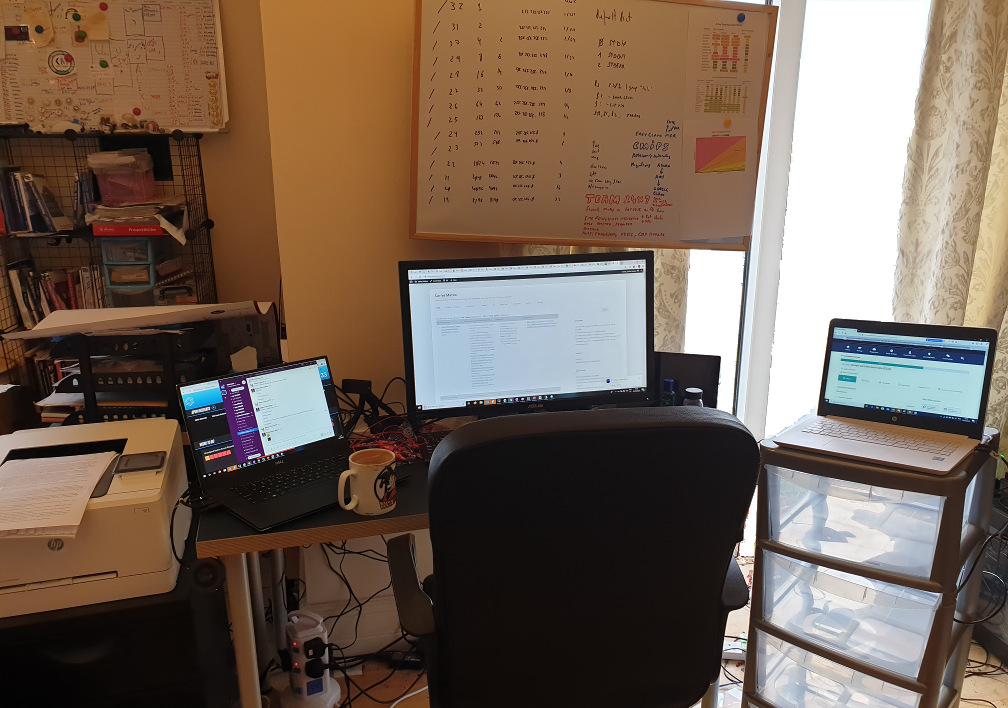

Tips for working with a laptop

- A very simple tip: if you work long hours on your laptop, I recommend buying an external monitor, a keyboard and a mouse.

- Your neck, your back and your eyesight will thank you. :)

- A 22″ Full HD monitor or better should work really well for you.

Phone tips

- On 25 October WhatsApp suffered outages around the world.

- I suggest you have an alternative in case WhatsApp goes down. For example, Telegram.

- To save phone battery without losing features, you can turn off Bluetooth and Wi-Fi when you do not need them.

- You can also enable an option called Power saving, which reduces screen brightness, among other optimisations, so that the battery lasts much longer.

- You can listen to WhatsApp voice messages without anyone else hearing them, simply by pressing play and holding the phone to your ear as if you were on a call.

- Phones have sensors that detect when the phone is against a surface.

- Always lock the screen before putting the phone in your pocket (to avoid triggering things by accident)

- The trend is to charge phones faster and faster. The new Xiaomi Redmi Note 12 charges its 4,300mAh battery in 9 minutes.

- The 120W Hypercharge model needs 15 minutes

- The same as Oppo's 150W SuperVOOC

- These are phones with excellent features for around USD $300

- https://www.engadget.com/redmi-note-12-discovery-edition-210w-hypercharge-064020788.html

Electric vehicles

- The European Union is working on banning the sale of petrol cars and vans from 2035.

Entertainment

- Apple has raised the price of Apple Music and Apple TV+

- On 3 November Netflix will launch its cheaper, ad-supported plan, for €5.49 a month in Catalonia

- The virtual reality headset for the Sony PlayStation 5, the VR2, promises to be really fun.

- Other companies such as Facebook / Meta are working on delivering very positive virtual reality experiences and are building more powerful and affordable headsets. (Meta bought Oculus and now makes virtual reality headsets.)

Mac

- The new Apple iPad Pro, with the M2 chip, has been reviewed by several experts, and they speak very highly of it, especially of its execution speed.

- It can even edit 8K video.

Films / Series

- It is not new, but if you liked Karate Kid, I recommend the Netflix series Cobra Kai, to watch as a family.


Previous programs

Previous program: RAB El nou món digital del Dilluns 24 d’Octubre de 2022 [CA]

All programs: RAB

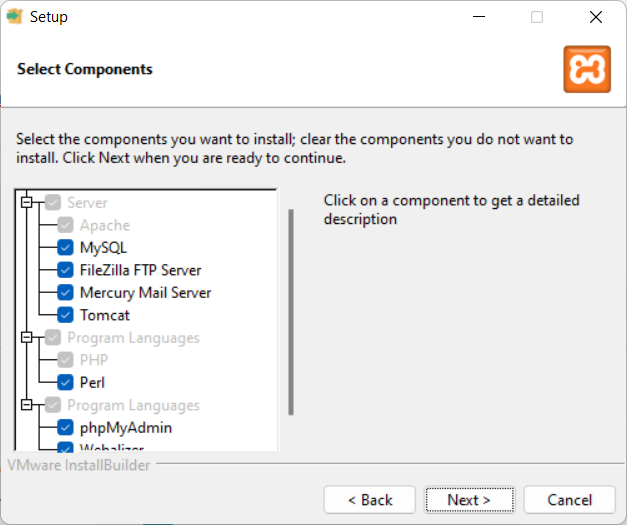

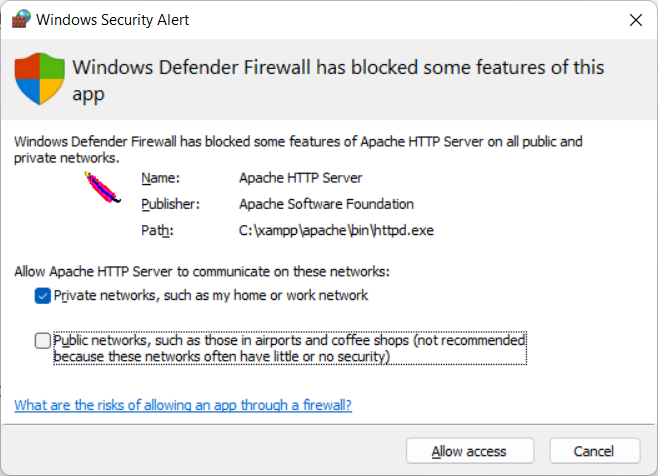

Installing PHP environment for development in Windows

This article is for my students learning PHP, or for any student that wants to learn PHP and uses a Windows computer for that.

For this we will install:

XAMPP, which is available for Windows, macOS and Linux.

You can download it from: https://www.apachefriends.org/

XAMPP installs together:

- Apache

- MariaDB

- PHP

- Perl

Install WampServer instead of XAMPP (if you prefer it)

Alternatively you can install WampServer, which installs:

- Apache

- MySQL

- PHP

- phpMyAdmin

https://www.wampserver.com/en/

Development IDE

As the development environment we will use PhpStorm, from JetBrains.

https://www.jetbrains.com/phpstorm/

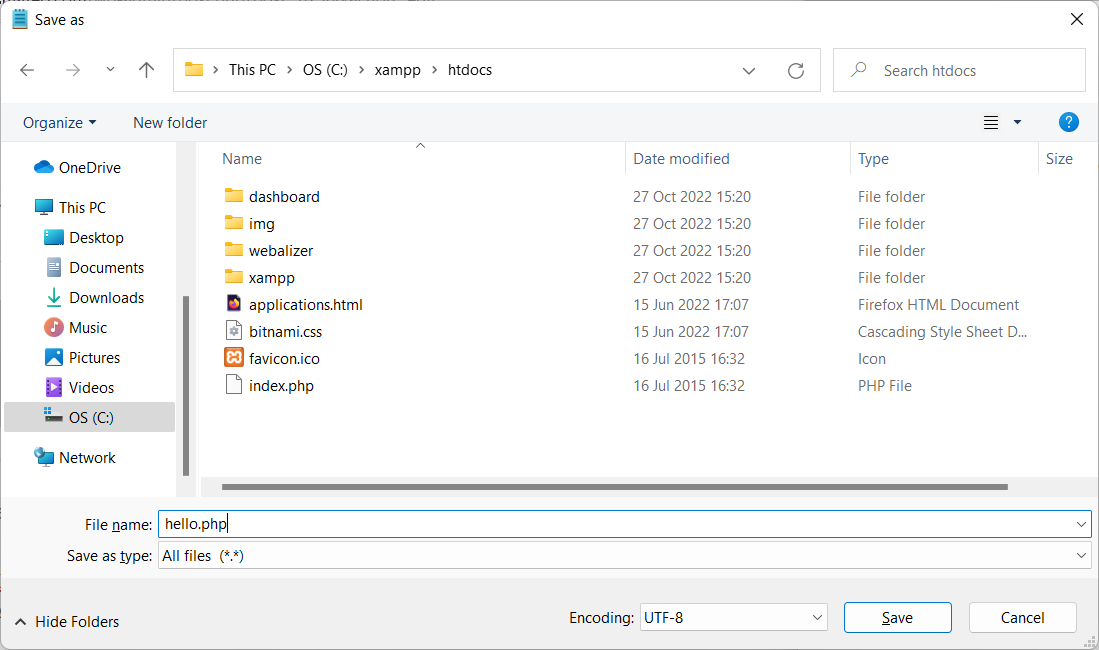

Testing the installation of XAMPP

The default directory for the PHP files is C:\xampp\htdocs

Create a file in c:\xampp\htdocs named hello.php

<?php

$s_today = date("Y-m-d");

echo "Hello! Today is ".$s_today;

?>

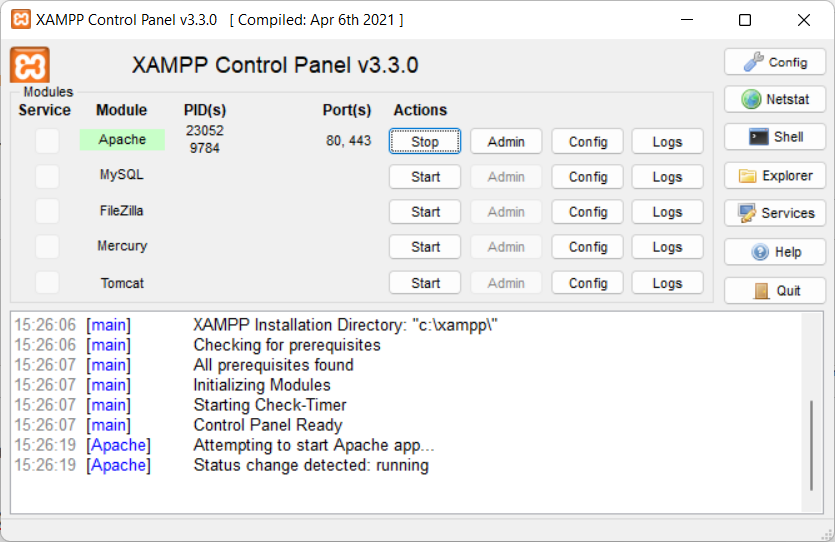

Now start Apache:

- Open the XAMPP Control Panel

- Start the Apache Server

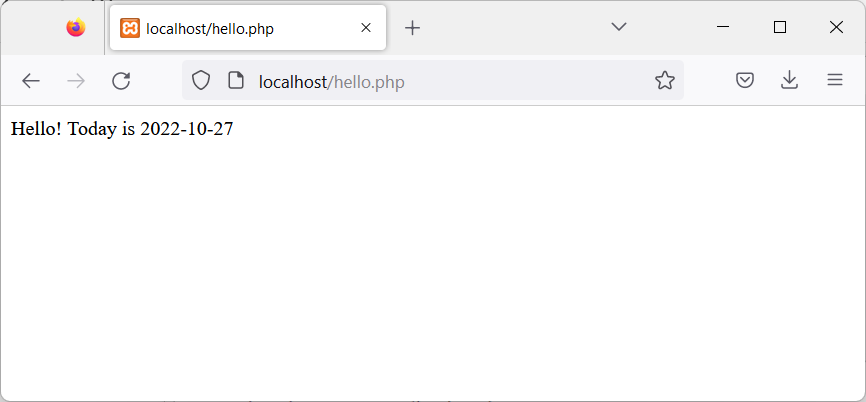

Then test the new page by opening it in the browser:

http://localhost/hello.php
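The same check can be done from a terminal. This is a minimal sketch, assuming a Bash-like shell on Windows (for example Git Bash or WSL) with curl available; the helper names looks_like_hello and check_hello are hypothetical. It is mainly useful to tell apart a page that PHP has executed from one where Apache returns the raw source of the file.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed if the text in $1 looks like the output of
# hello.php, that is "Hello! Today is " followed by a YYYY-MM-DD date.
looks_like_hello() {
    printf '%s\n' "$1" | grep -Eq '^Hello! Today is [0-9]{4}-[0-9]{2}-[0-9]{2}$'
}

# Hypothetical helper: fetch the page (default: the URL used in this article)
# and report whether the response looks like executed PHP.
check_hello() {
    local s_url="${1:-http://localhost/hello.php}"
    local s_body
    s_body=$(curl -s "$s_url")
    if looks_like_hello "$s_body"; then
        echo "OK: $s_body"
    else
        echo "Unexpected response from $s_url" >&2
        return 1
    fi
}
```

Running check_hello with Apache started should print the greeting; if it reports an unexpected response, check that Apache is running and that the file is inside C:\xampp\htdocs.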

Backup and Restore your Ubuntu Linux Workstations

This is a mechanism I invented and have been using for decades to migrate or clone my Linux desktops to other physical machines.

This script is focused on doing the job for Ubuntu, but I was already doing this 30 years ago for X Window, when I was responsible for the Linux platform of an ISP (Internet Service Provider). So it is compatible with any Linux desktop or server.

It has the advantage of being a very lightweight backup. You don't need to back up /etc or /var, as long as you install a new OS and restore the folders that you backed up. You can back up and restore Wine (the Windows compatibility layer) programs completely, and to/from VMs and instances as well.

It is based on users rather than on machines.

It backs up using a timestamp, so you keep all the different versions as they change over time. If you prefer to avoid time versions and keep only the latest backup, you can merge the backups into the same folder: replace s_PATH_BACKUP_NOW="${s_PATH_BACKUP}${s_DATETIME}/" with s_PATH_BACKUP_NOW="${s_PATH_BACKUP}", for instance. You can also add a folder per machine if you prefer, for example if you use the same user ID across several desktops/servers.
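The three layouts described above (versioned by timestamp, merged into one folder, and with an extra folder per machine) can be sketched as one function. The name build_backup_path and its mode argument are hypothetical and are not part of the script in this article; the timestamp format is the one the script uses.

```bash
#!/usr/bin/env bash
# Hypothetical helper: build the target path for a backup run.
#   $1 base path ending in "/" (s_PATH_BACKUP in the script)
#   $2 mode: "versioned" adds a timestamp folder, "merged" reuses the base path
#   $3 optional machine name, added as an extra folder level
build_backup_path() {
    local s_base="$1"
    local s_mode="$2"
    local s_machine="${3:-}"
    local s_path="$s_base"

    if [ -n "$s_machine" ]; then
        s_path="${s_path}${s_machine}/"
    fi
    if [ "$s_mode" = "versioned" ]; then
        s_path="${s_path}$(date +"%Y-%m-%d-%H-%M-%S")/"
    fi
    echo "$s_path"
}
```

For example, s_PATH_BACKUP_NOW=$(build_backup_path "$s_PATH_BACKUP" versioned "$(hostname)") keeps one timestamped folder per run under a folder named after the machine.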

I offer you a much simplified version of my scripts, but it may well serve your needs.

#!/usr/bin/env bash

# Author: Carles Mateo

# Last Update: 2022-10-23 10:48 Irish Time

# User we want to backup data for

s_USER="carles"

# Target PATH for the Backups

s_PATH_BACKUP="/home/${s_USER}/Desktop/Bck/"

s_DATE=$(date +"%Y-%m-%d")

s_DATETIME=$(date +"%Y-%m-%d-%H-%M-%S")

s_PATH_BACKUP_NOW="${s_PATH_BACKUP}${s_DATETIME}/"

echo "Creating path ${s_PATH_BACKUP} and ${s_PATH_BACKUP_NOW}"

# -p creates the missing parent folders and does not fail if the folder already exists

mkdir -p "${s_PATH_BACKUP_NOW}"

s_PATH_KEY="/home/${s_USER}/Desktop/keys/2007-01-07-cloud23.pem"

s_DOCKER_IMG_JENKINS_EXPORT=${s_DATE}-jenkins-base.tar

s_DOCKER_IMG_JENKINS_BLUEOCEAN2_EXPORT=${s_DATE}-jenkins-blueocean2.tar

s_PGP_FILE=${s_DATETIME}-pgp.zip

# Version the PGP files

echo "Compressing the PGP files as ${s_PGP_FILE}"

zip -r "${s_PATH_BACKUP_NOW}${s_PGP_FILE}" "/home/${s_USER}/Desktop/PGP/"*

# Copy to BCK folder, or ZFS or to an external drive Locally as defined in: s_PATH_BACKUP_NOW

echo "Copying Data to ${s_PATH_BACKUP_NOW}data/"

rsync -a --exclude={} --acls --xattrs --owner --group --times --stats --human-readable --progress -z "/home/${s_USER}/Desktop/data/" "${s_PATH_BACKUP_NOW}data/"

rsync -a --exclude={'Desktop','Downloads','.local/share/Trash/','.local/lib/python2.7/','.local/lib/python3.6/','.local/lib/python3.8/','.local/lib/python3.10/','.cache/JetBrains/'} --acls --xattrs --owner --group --times --stats --human-readable --progress -z "/home/${s_USER}/" "${s_PATH_BACKUP_NOW}home/${s_USER}/"

rsync -a --exclude={} --acls --xattrs --owner --group --times --stats --human-readable --progress -z "/home/${s_USER}/Desktop/code/" "${s_PATH_BACKUP_NOW}code/"

echo "Showing backup dir ${s_PATH_BACKUP_NOW}"

ls -hal "${s_PATH_BACKUP_NOW}"

df -h /

See how I exclude certain folders, like Desktop or Downloads, with --exclude. Bash brace expansion turns the list into one --exclude option per folder.

It relies on the very useful rsync program. It also relies on zip to compress entire folders (the PGP keys in the example).

If you use the second part, to compress Docker images (Jenkins in this example), you will need to run it with sudo and you will also need gzip.

# continuation... sudo running required.

# Save Docker Images

echo "Saving Docker Jenkins /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_EXPORT}"

sudo docker save jenkins:base --output /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_EXPORT}

echo "Saving Docker Jenkins /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_BLUEOCEAN2_EXPORT}"

# NOTE: this line saves jenkins:base a second time; replace it with the name of your Blue Ocean image

sudo docker save jenkins:base --output /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_BLUEOCEAN2_EXPORT}

echo "Setting permissions"

sudo chown "${s_USER}:${s_USER}" /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_EXPORT}

sudo chown "${s_USER}:${s_USER}" /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_BLUEOCEAN2_EXPORT}

echo "Compressing /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_EXPORT}"

gzip /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_EXPORT}

gzip /home/${s_USER}/Desktop/Docker_Images/${s_DOCKER_IMG_JENKINS_BLUEOCEAN2_EXPORT}

rsync -a --exclude={} --acls --xattrs --owner --group --times --stats --human-readable --progress -z "/home/${s_USER}/Desktop/Docker_Images/" "${s_PATH_BACKUP_NOW}Docker_Images/"

There is a final part, if you want to back up to one or more remote servers using ssh:

# continuation... to copy to a remote Server.

s_PATH_REMOTE="bck7@cloubbck11.carlesmateo.com:/Bck/Desktop/${s_USER}/data/"

# Copy to the other Server

rsync -e "ssh -i ${s_PATH_KEY}" -a --exclude={} --acls --xattrs --owner --group --times --stats --human-readable --progress -z "/home/${s_USER}/Desktop/data/" "${s_PATH_REMOTE}"

I recommend using the same methodology on all your desktops, for example having a data/ folder on the Desktop of each user.
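The scripts above only cover the backup side. A restore on a fresh install could look like the sketch below, which is an assumption on my side and not one of the original scripts: it picks the newest timestamped folder (the names sort chronologically as text) and copies data/ back with rsync. The names latest_backup_dir and restore_data are hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the newest timestamped folder under the path in $1.
# Names like 2022-10-23-10-48-00 sort chronologically when sorted as text.
latest_backup_dir() {
    local s_base="$1"
    local s_latest
    s_latest=$(find "$s_base" -mindepth 1 -maxdepth 1 -type d | sort | tail -n 1)
    if [ -z "$s_latest" ]; then
        return 1
    fi
    echo "${s_latest}/"
}

# Hypothetical helper: copy the data/ folder of the newest backup under $1
# back to the Desktop of the user named in $2.
restore_data() {
    local s_base="$1"
    local s_user="$2"
    local s_from
    s_from=$(latest_backup_dir "$s_base") || return 1
    rsync -a --acls --xattrs --stats --human-readable "${s_from}data/" "/home/${s_user}/Desktop/data/"
}
```

For example, restore_data /media/external/Bck carles would restore the data folder of user carles, where /media/external/Bck is only an illustrative path. The same pattern applies to the home/ and code/ folders.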

You can use erasure coding to split the backups into blocks and store a piece with each of several cloud providers.

You can also store your backups long-term with services like Amazon Glacier.

Other ideas are storing certain files in git and in Hadoop HDFS.

If you want, you can compute checksums of your files before copying them to another device or server.

You will use tools like sha512sum or md5sum.
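As an illustration of the checksum idea, here is a minimal sketch with sha512sum from GNU coreutils. The names create_manifest and verify_manifest are hypothetical. The manifest stores paths relative to the folder, so it can be checked on the destination machine, and it should be kept outside the folder being hashed.

```bash
#!/usr/bin/env bash
# Hypothetical helper: write SHA-512 checksums of every file under $1 to the
# manifest file $2. Paths are stored relative to $1.
create_manifest() {
    local s_dir="$1"
    local s_manifest="$2"
    (cd "$s_dir" && find . -type f -print0 | sort -z | xargs -0 -r sha512sum) > "$s_manifest"
}

# Hypothetical helper: verify the files under $1 against the manifest $2.
# Returns non-zero if any file is missing or has changed.
verify_manifest() {
    local s_dir="$1"
    local s_manifest="$2"
    (cd "$s_dir" && sha512sum --check --quiet) < "$s_manifest"
}
```

A typical use is to run create_manifest on the source folder before the rsync, copy the manifest along with the data, and run verify_manifest on the destination once the copy has finished.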