Time ago there was a web page that was rendered in blank for certain group of users.

The errors were coming from an Oracle instance. One SysAdmin restarted the instance, but the errors continued.

Often there are problems due to having two different worlds: Development and Production/Operations.

What works in Development, or even in Docker, may not work at Scale in Production.

That query that works with 100,000 products, may not work with 10,000,000.

I have programmed a lot for web, so when I saw a blank page I knew it was an internal error as the headers sent by the Web Server indicated 500. DBAs were seeing elevated number of errors in one of the Servers.

So I went straight to the Oracle’s logs for that Servers.

I did a quick filter in bash:

cat /u01/app/oracle/diag/rdbms/world7c/world7c2/alert/log.xml | grep "ERR" -B4 -A3

This returned several errors of the kind “ORA 600 [ipc_recreate_que_2]” but this was not the error our bad guy was:

‘ORA 600 [KGL-heap-size-exceeded]’

The XML fragment was similar to this:

<msg time='2016-01-24T13:28:33.263+00:00' org_id='oracle' comp_id='rdbms'

msg_id='7725874800' type='INCIDENT_ERROR' group='Generic Internal Error'

level='1' host_id='gotham.world7c.justice.cat' host_addr='10.100.100.30'

pid='281279' prob_key='ORA 600 [KGL-heap-size-exceeded]' downstream_comp='LIBCACHE'

errid='726175' detail_path='/u01/app/oracle/diag/rdbms/world7c/world7c2/trace/world7c2_ora_281279.trc'>

<txt>Errors in file /u01/app/oracle/diag/rdbms/world7c/world7c2/trace/world7c2_ora_281279.trc (incident=726175):

ORA-00600: internal error code, arguments: [KGL-heap-size-exceeded], [0x14D22C0C30], [0], [524288008], [], [], [], [], [], [], [], []

</txt></msg>

Just before this error, there was an error with a Query, and the PID matched, so it seemed cleared to me that the query was causing the crash at Oracle level.

Checking the file:

/u01/app/oracle/diag/rdbms/world7c/world7c2/trace/world7c2_ora_281279.trc

The content was something like this:

<msg time='2016-01-24T13:28:33.263+00:00' org_id='oracle' comp_id='rdbms'

msg_id='7725874800' type='INCIDENT_ERROR' group='Generic Internal Error'

level='1' host_id='gotham.world7c.justice.cat' host_addr='10.100.100.30'

pid='281279' prob_key='ORA 600 [KGL-heap-size-exceeded]' downstream_comp='LIBCACHE'

errid='726175' detail_path='/u01/app/oracle/diag/rdbms/world7c/world7c2/trace/world7c2_ora_281279.trc'>

<txt>Errors in file /u01/app/oracle/diag/rdbms/world7c/world7c2/trace/world7c2_ora_281279.trc (incident=726175):

ORA-00600: internal error code, arguments: [KGL-heap-size-exceeded], [0x14D22C0C30], [0], [524288008], [], [], [], [], [], [], [], []

</txt>

</msg>

Basically in our case, the query that was launched by the BackEnd was using more memory than allowed, which caused Oracle to kill it.

That is a tunnable that you can modify introduced in Oracle 10g.

You can see the current values first:

SQL> select

2 nam.ksppinm NAME,

3 nam.ksppdesc DESCRIPTION,

4 val.KSPPSTVL

5 from

6 x$ksppi nam,

7 x$ksppsv val

8 where nam.indx = val.indx and nam.ksppinm like '%kgl_large_heap_%_threshold%';

NAME | DESCRIPTION | KSPPSTVL

=============================================================================================

_kgl_large_heap_warning_threshold | maximum heap size before KGL | 4194304

writes warnings to the alert log

---------------------------------------------------------------------------------------------

_kgl_large_heap_assert_threshold | maximum heap size before KGL | 4194304

raises an internal error

So, _kgl_large_heap_warning_threshold is the maximum heap before getting a warning, and _kgl_large_heap_assert_threshold is the maximum heap before getting the error.

Depending in your case the solution can be either:

- Breaking your query in several to reduce the memory used

- Use paginating or LIMIT

- Set a bigger value for those tunnables.

It will work setting 0 for these to variables, although I don’t recommend it to you, as you want your Server to kill queries that are taking more memory than you want.

To increase the value of , you have to update it. Please note it is in bytes, so for 32MB is 32 * 1024 * 1024, so 33,554,432, and using spfile:

SQL> alter system set "_kgl_large_heap_warning_threshold"=33554432

scope=spfile ;

SQL> shutdown immediate

SQL> startup

SQL> show parameter _kgl_large_heap_warning_threshold

NAME TYPE VALUE

==================================|=========|===============

_kgl_large_heap_warning_threshold | integer | 33554432

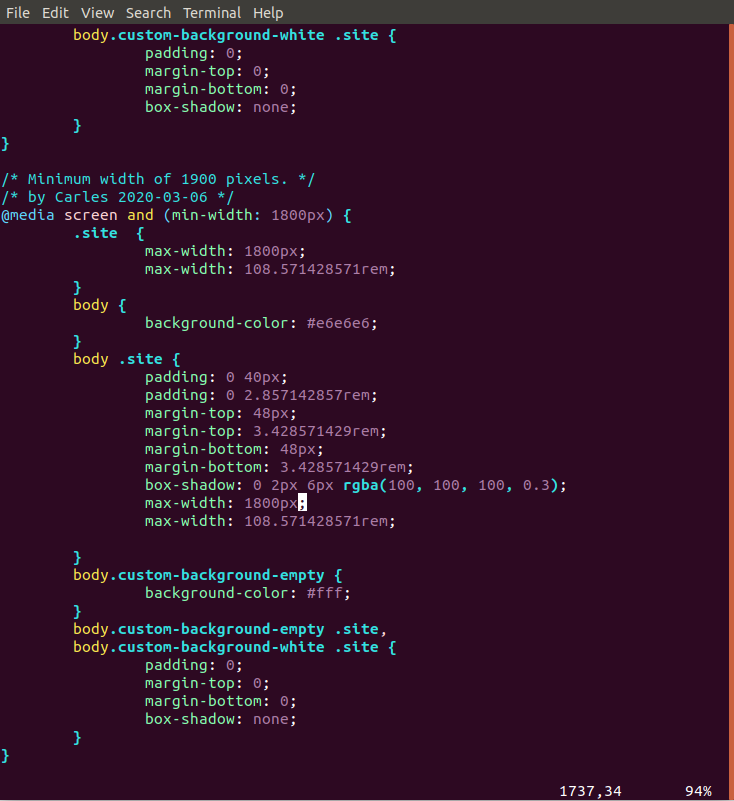

Or if using the parameter file, set:

_kgl_large_heap_warning_threshold=33554432